Responsible AI is more than just a buzzword. It's a strategic framework for embedding fairness, transparency, accountability, and privacy directly into how you design, build, and deploy your AI systems. This isn't just a technical exercise; it's about making sure your AI aligns with your company's ethics, slashes operational risk, and builds unshakable trust with your customers and stakeholders.

Key Takeaways

- Strategic Growth, Not Compliance: Responsible AI is a competitive advantage that fuels sustainable growth by building customer trust and reducing operational risk.

- Governance is Essential: A formal AI governance framework with clear roles, principles, and policies is necessary to move from scattered experiments to scalable, aligned AI initiatives.

- Proactive Risk Management: Conducting thorough risk and impact assessments before deployment helps identify and mitigate potential harm, protecting your brand and customers.

- Data Integrity is Paramount: The quality and security of your data, combined with rigorous model validation for fairness and bias, are foundational to trustworthy AI.

- Humans are Non-Negotiable: Continuous monitoring and a human-in-the-loop (HITL) process for high-stakes decisions ensure AI remains effective, accountable, and aligned with business goals over time.

Why Responsible AI Is a Growth Strategy, Not a Roadblock

Too many leaders still see responsible AI through the narrow lens of compliance—a checkbox to tick, a cost to bear. That’s a missed opportunity. When you approach it proactively, a structured responsible AI plan becomes a powerful engine for sustainable growth and a serious competitive advantage.

The days of treating AI as a series of disconnected, siloed experiments are over. To win, you have to connect your AI initiatives directly to business outcomes. A solid framework for responsible AI delivers tangible results that go far beyond just avoiding fines.

The Shift From Experimentation To Scaled Integration

AI adoption has exploded, and governance is sprinting to catch up. Early 2026 estimates show a staggering 78% of organizations globally are using AI in at least one business function, a huge jump from just a year ago. It's a clear signal that the era of experimentation is ending and the era of scaled integration is here.

This rapid rollout amplifies the risks of unchecked AI. Think biased outcomes in AI-powered lead generation or devastating data privacy breaches. A responsible framework isn't about slowing down; it's about building guardrails so you can scale faster and more safely.

Responsible AI isn't just about risk mitigation—it's a strategic imperative that directly fuels growth. By embedding principles like fairness and transparency into your AI lifecycle, you build customer trust, sharpen decision-making, and create resilient, high-performing systems that deliver superior ROI.

Connecting Responsible AI Principles to Business Impact

This table breaks down how the core pillars of responsible AI translate directly into measurable business outcomes that every growth leader should care about.

| Responsible AI Principle | Core Objective | Business Impact Opportunity |
|---|---|---|
| Fairness & Inclusivity | Eliminate bias and ensure equitable outcomes for all user groups. | Expands your addressable market and strengthens brand reputation. |
| Transparency & Explainability | Make AI decisions understandable to humans. | Builds deep customer trust and accelerates internal adoption. |
| Accountability & Governance | Establish clear ownership and oversight for AI systems. | Reduces regulatory risk and improves operational efficiency. |
| Privacy & Security | Protect user data and secure systems from threats. | Lowers the risk of costly data breaches and enhances customer loyalty. |
| Reliability & Safety | Ensure AI performs consistently and safely as intended. | Increases ROI through better performance and fewer critical errors. |

Ultimately, a structured approach ensures every AI system you deploy is not just effective, but also trustworthy and reliable. This foundation is critical for long-term success and getting broad buy-in across the organization.

Here’s how it cashes out:

- Higher ROI: Well-governed AI models are simply more accurate. They're less prone to costly errors, leading to smarter resource allocation and better performance across the board.

- Lower Operational Risk: When you proactively assess risks, you spot potential legal, reputational, and financial landmines before they blow up.

- Stronger Customer Trust: Transparency isn't just a nice-to-have. Demonstrating your commitment to ethical AI is one of the most powerful ways to build and keep brand loyalty.

Building Your AI Governance Framework from Scratch

A powerful AI model without guardrails is like a high-performance engine with no steering wheel. It’s got a ton of potential but zero direction, making it a huge liability. This is where AI governance comes in—it’s the structure, the rules, and the accountability you need to point your AI initiatives toward your actual business goals.

Without a solid framework, AI adoption tends to devolve into a chaotic mess of disconnected projects. Each one has its own standards, its own risks, and an ROI that's anyone's guess. A governance framework pulls you out of that fragmented experimentation and into a unified, strategic approach where every AI project is transparent, accountable, and aligned with your core mission.

Defining Key Roles and Responsibilities

First things first: you have to assign clear ownership. Ambiguity is the enemy of accountability, so you need to spell out exactly who is responsible for what, from high-level strategy all the way down to the nitty-gritty of model validation.

Here are the core roles you’ll want to establish in your governance structure:

- AI Governance Committee: Think of this as the central nervous system for your AI strategy. It’s a cross-functional team with leaders from legal, IT, data science, and key business units. Their job is to set policies, review high-risk projects, and make sure everything aligns with business goals.

- Chief AI Officer (or equivalent): This is your executive sponsor, the person championing the AI vision from the top. They secure the resources, sell the value of responsible AI to the board, and hold the governance committee’s feet to the fire.

- AI Product Owners: These are the people responsible for the entire lifecycle of a specific AI application. They work hand-in-hand with the governance committee to ensure their projects stick to the ethical guidelines and risk protocols you’ve laid out.

A critical piece of this puzzle is establishing a strong system of Artificial Intelligence Governance to guide how these roles function day-to-day.

Key Takeaway: Your AI governance framework can't be cooked up in a vacuum by the data science team. It has to be a collaborative effort, pulling in diverse perspectives from across the business to make sure the policies are not only effective but also practical enough to actually implement.

Practical Example: Governance in Action

Imagine a manufacturing company using an AI-powered CRM to score leads. The governance committee might include the VP of Sales, the Head of IT, a data scientist, and someone from the legal team. They’d be on the hook for defining what "fairness" actually means for their lead scoring model and setting up a clear process for the sales team to appeal if the AI kicks out a promising lead. That’s the kind of real-world oversight that makes for a successful responsible AI implementation.

For a deeper dive, you might want to explore our guide on comprehensive AI enablement services to see how this all fits into a broader business strategy.

To help you get started, here’s a practical checklist of the essential components you'll need to build out your own AI governance framework.

AI Governance Framework Components

| Component | Key Actions | Ownership Example |
|---|---|---|
| AI Principles | Define and publish high-level ethical commitments (e.g., fairness, transparency). | AI Governance Committee |
| Policies & Standards | Create detailed, actionable rules for AI development, data handling, and deployment. | Legal & Compliance, Head of AI |
| Roles & Responsibilities | Clearly map out who owns what, from strategic oversight to model validation. | Chief AI Officer, Department Heads |
| Risk Assessment | Develop a standardized process to identify, measure, and mitigate AI-related risks. | Risk Management, AI Product Owners |
| Model Validation | Mandate technical and business validation before any model goes into production. | Data Science Lead, Business Unit Leader |
| Monitoring & Reporting | Establish KPIs to track model performance, bias, and business impact post-deployment. | MLOps Team, AI Product Owners |
| Training & Communication | Educate all relevant employees on AI policies, ethical guidelines, and their roles. | HR, AI Governance Committee |

This table isn't just a theoretical exercise; it’s a blueprint. Use it to ensure you cover all your bases and assign real names to each of these crucial functions.

Crafting Your AI Principles and Policies

Once the roles are set, it’s time to codify your organization's stance on AI. Think of your AI principles as your north star—a set of high-level ethical commitments that guide every single AI project. They should be simple, memorable, and a direct reflection of your company's core values.

Common principles usually include commitments to:

- Fairness: Actively work to find and fix harmful bias in AI systems.

- Transparency: Make AI decision-making as understandable as it can be.

- Accountability: Ensure a human is ultimately responsible for what the AI does.

- Privacy: Protect user and company data with the highest security standards.

These high-level principles then feed into your specific AI policies, which are the detailed, actionable rules for day-to-day work. For example, a policy that flows from your "Transparency" principle might require any customer-facing AI to clearly state that the user is interacting with an AI, not a person.

Aligning Governance with Business Strategy

AI governance is no longer just a "nice-to-have" compliance checkbox; it's a strategic imperative. By 2026, 77% of organizations are expected to have dedicated programs designed to align their AI investments with business goals and manage the associated risks.

While 70% of leaders feel ready to put AI principles into practice, only 23% have a corporate-wide AI strategy in place. That number is up significantly from the previous year, which shows just how quickly companies are maturing in this space.

This data highlights a critical point: your governance framework has to directly support your business objectives. If your goal is to break into a new market, your AI governance needs to include processes for vetting the unique regulatory and ethical rules of that region. If you're trying to boost operational efficiency, your framework should prioritize AI projects that offer the clearest path to automation with the most manageable risk.

Impact Opportunity

A strong governance framework is the engine of scalable AI. By aligning every AI initiative with core business objectives and establishing clear ownership, you move beyond isolated experiments. This strategic alignment accelerates time-to-value, reduces redundant efforts, and ensures that your AI investments are consistently driving measurable growth and innovation across the entire organization.

Conduct AI Risk and Impact Assessments

Before you even think about weaving an AI system into your operations, you have to understand its potential fallout. This isn’t just good practice—it's your first line of defense. A solid risk and impact assessment helps you spot potential harm before it can hurt your brand, tick off customers, or land you in legal hot water.

This isn't about checking a box after the fact. It's about pulling risk management into the core of your development cycle and asking the tough questions upfront. What could go wrong? Who gets hurt if it does? And how are we going to stop it?

Stages of a Comprehensive Assessment

A real assessment is more than just a technical once-over. You need a 360-degree view that takes into account ethical tripwires, data privacy landmines, and the real-world impact on actual human beings. The entire point is to systematically uncover the blind spots in your design and rollout plans.

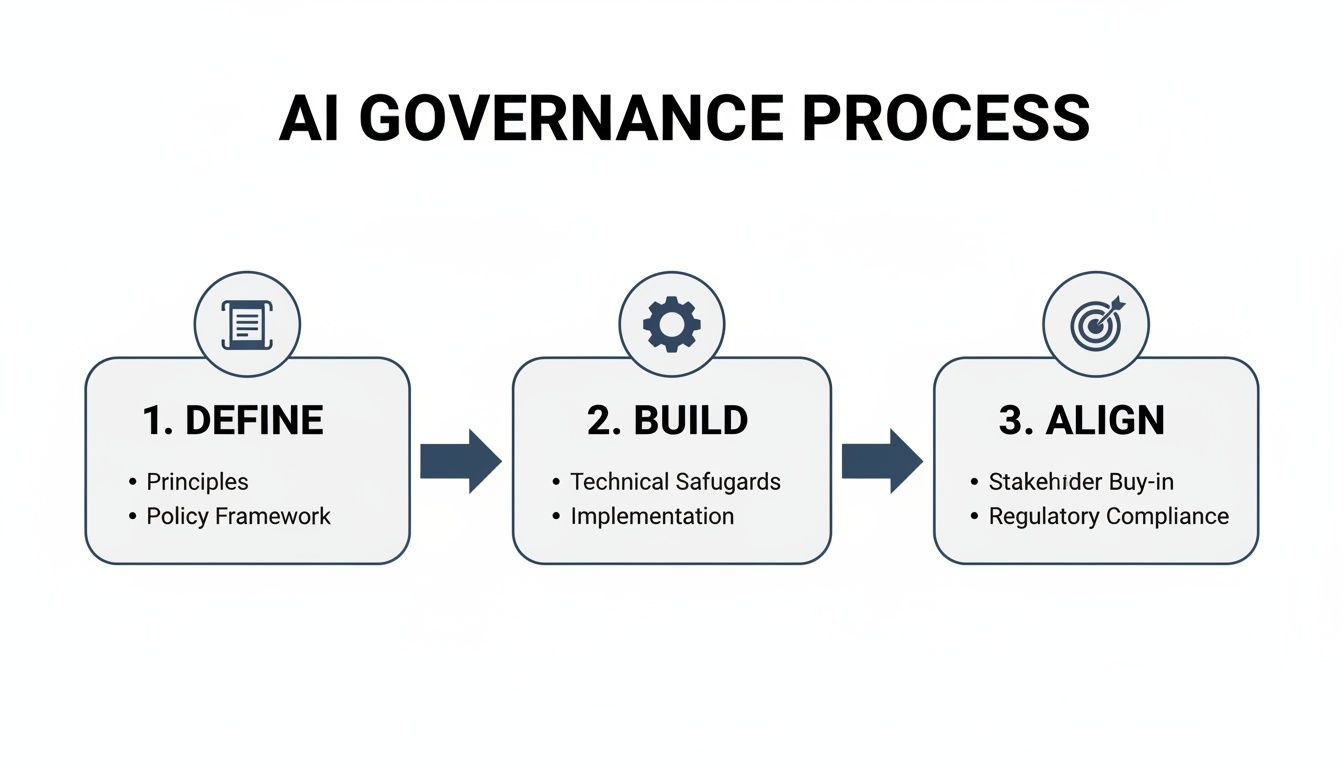

This process is a key part of a much larger governance framework.

The diagram shows how defining your principles, building the system, and aligning it with business goals are all connected. Risk assessment is the glue holding the "Build" and "Align" phases together.

A structured approach usually breaks down into a few key stages:

- Identification: Get everyone in a room (virtual or otherwise) and brainstorm every conceivable risk. Think technical, ethical, operational, and societal. No idea is a bad idea at this stage.

- Analysis: Now, you prioritize. Figure out the likelihood and potential damage of each risk. A typo in a UI is not the same as a model spitting out discriminatory loan decisions.

- Mitigation: This is where you develop concrete, actionable plans to reduce, transfer, or consciously accept each major risk.

- Monitoring: Once the AI is live, set up a system to keep an eye on your mitigation strategies. Are they working? Do they need to be adjusted?

Breaking the assessment down like this gives you a repeatable playbook you can use for any AI project, whether it's a small internal tool or a massive customer-facing platform.
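To make the Analysis stage concrete, here is a minimal sketch of a risk register that ranks risks by likelihood times impact. The 1-to-5 scales, the `Risk` class, and the example scores are illustrative assumptions, not a prescribed methodology; your own committee would set the scales and the numbers.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Likelihood x impact, a common risk-matrix convention
        return self.likelihood * self.impact


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Return risks sorted from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Hypothetical entries; the scores are made up for illustration
risks = [
    Risk("Typo in chatbot UI", likelihood=4, impact=1),
    Risk("Discriminatory loan decisions", likelihood=2, impact=5),
    Risk("PII leak from chat logs", likelihood=3, impact=5),
]

ranked = prioritize(risks)
for r in ranked:
    print(f"{r.score:>2}  {r.name}")
```

Even a simple product like this keeps the typo at the bottom of the list and the high-impact risks at the top, which is the point of the Analysis stage.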

Practical Example: Assessing a Customer Service AI Agent

Let’s make this real. Imagine your company is building an AI chatbot to handle basic customer service questions. The goal is to free up your human agents for more complex, high-touch problems.

Even for a seemingly simple tool like this, the risk assessment needs to be thorough.

Potential Risks to Evaluate:

- Data Privacy Violations: Is the chatbot collecting names, emails, or other personal info? If so, how is it stored and secured? A breach here isn't just an "oops"; it's a trust-shattering event that can also bring regulatory fines.

- Model Fairness and Bias: Was the training data pulled from a diverse set of customer chats? If not, you might have a model that can't understand certain accents or dialects, leading to frustrated users and accusations of bias.

- Inaccurate or Harmful Responses: What happens when the bot misunderstands a question and gives the wrong price, a faulty product instruction, or bad policy advice? This could cost your customers money or even cause physical harm.

- Job Displacement Anxiety: How does your existing support team feel about this? Without a clear plan for communication and retraining, you're looking at a serious morale problem and internal resistance.

The whole point of an impact assessment is to make potential disasters visible before they happen. It turns abstract fears into concrete problems you can actually solve with better design, smarter data governance, and clear protocols.

Turning Assessment into Action

Once the risks are on the table, you build a mitigation plan. For our chatbot, that could mean adding data anonymization, running regular bias audits, and building a solid "human-in-the-loop" escalation path for when the bot gets stuck. To get a better sense of your company's readiness for projects like this, it’s worth evaluating your internal AI Quotient.

This systematic process is where the real value lies.

Impact Opportunity

A well-run risk assessment is your best tool for protecting your brand and staying on the right side of regulators. When you proactively find and fix potential harms—from data breaches to biased outcomes—you create systems that are not only powerful but also trustworthy. This builds deep customer loyalty, slashes the risk of costly penalties, and cements your reputation as a responsible leader.

Protecting Data and Ensuring Model Integrity

Great AI runs on great data, but that data is also one of your biggest sources of risk. A truly responsible AI strategy comes down to two things: fiercely protecting the data you use for training and being relentless about validating your models.

If you don't nail both, you're not just building ineffective tools—you're building potentially harmful ones. This is about more than just running technical checks. It requires a genuine commitment to data privacy and a proactive plan to find and fix bias before it ever sees the light of day.

Best Practices for Responsible Data Management

The integrity of any model is a direct reflection of the data it was trained on. Garbage in, garbage out isn't just a saying; it's a fundamental truth. You need clear, established rules for how data is collected, managed, and stored long before it gets anywhere near an algorithm.

Here's where to focus:

- Ethical Data Sourcing: First rule: get explicit consent. Make sure you have a clear, stated purpose for every piece of data you collect. If you’re buying data from a third party, dig into their collection methods. You need to be sure they meet your ethical standards and comply with regulations like GDPR.

- Privacy-Preserving Techniques: Implement methods like data anonymization or pseudonymization right away. The goal is to remove or mask personally identifiable information (PII) in your training datasets. This step drastically reduces the fallout if a data breach ever occurs.

- Secure Data Storage and Access: Use encrypted storage and lock down access. Not everyone needs to see raw, sensitive data. Create strict access controls and make sure you have an audit trail for every single data-related activity.
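As one illustration of the pseudonymization idea above, the sketch below replaces PII fields with a keyed hash using Python's standard `hmac` and `hashlib` modules. The field names, the `SECRET_KEY` value, and the 16-character truncation are assumptions made for the example. Keep in mind that pseudonymized data can still be linked back to a person by anyone holding the key, so it is not the same as full anonymization.

```python
import hashlib
import hmac

# Hypothetical secret; in practice this would come from a secrets manager
SECRET_KEY = b"replace-with-a-real-secret"

# Assumed names of the fields that hold PII in this example
PII_FIELDS = {"name", "email"}


def pseudonymize(record: dict, key: bytes = SECRET_KEY) -> dict:
    """Replace PII values with a keyed hash; other fields pass through unchanged."""
    out = {}
    for field, value in record.items():
        if field in PII_FIELDS:
            digest = hmac.new(key, str(value).encode("utf-8"), hashlib.sha256)
            # Truncated for readability; the same input always maps to the same token
            out[field] = digest.hexdigest()[:16]
        else:
            out[field] = value
    return out


row = {"name": "Ada Lovelace", "email": "ada@example.com", "plan": "pro"}
safe_row = pseudonymize(row)
print(safe_row)
```

Because the hash is deterministic for a given key, the same customer maps to the same token across datasets, so you can still join records without exposing the raw values.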

When you're trying to keep proprietary data secure, tools like a private instance of ChatGPT for business can give you the power of generative AI without exposing sensitive information.

Validating Models for Fairness and Robustness

With your data house in order, the spotlight turns to the model itself. Model validation isn’t just about checking for accuracy. It’s about a deep, critical search for hidden biases and a test of how the model will hold up in the messy, unpredictable real world.

Practical Example: Uncovering Bias in Lead Scoring

Think about a B2B company using AI to score marketing leads. Maybe their historical data shows that their best customers came from a specific industry. But was that because those leads were truly better, or was it just because that’s where the marketing team spent all its time and money?

If you train an AI on that biased data without proper validation, it will just learn to repeat history. It will double down on the old industry and ignore high-potential leads from new sectors, leaving a ton of money on the table.

To catch this, your validation process has to include specific fairness tests. Check if the model performs equally well across different customer segments—like industry, company size, or geography. A big drop in accuracy for any single group is a massive red flag. Fix it before you deploy.
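Here is a rough sketch of what a per-segment check could look like. The segment names, the toy data, and the 10-point gap threshold are all assumptions for illustration; the right threshold depends on your use case and sample sizes.

```python
from collections import defaultdict


def accuracy_by_segment(rows):
    """rows: iterable of (segment, predicted_label, actual_label) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for segment, predicted, actual in rows:
        total[segment] += 1
        correct[segment] += int(predicted == actual)
    return {seg: correct[seg] / total[seg] for seg in total}


def flag_gaps(acc, max_gap=0.10):
    """Return segments whose accuracy trails the best segment by more than max_gap."""
    best = max(acc.values())
    return sorted(seg for seg, a in acc.items() if best - a > max_gap)


# Toy validation set: (industry, model prediction, actual outcome)
rows = [
    ("manufacturing", 1, 1), ("manufacturing", 0, 0),
    ("manufacturing", 1, 1), ("manufacturing", 0, 0),
    ("healthcare", 1, 0), ("healthcare", 0, 0),
    ("healthcare", 0, 1), ("healthcare", 1, 1),
]

acc = accuracy_by_segment(rows)
flagged = flag_gaps(acc)
print(acc)
print("Segments to investigate:", flagged)
```

In this toy data the model is right every time for manufacturing leads and only half the time for healthcare leads, so healthcare gets flagged for a closer look before deployment.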

Impact Opportunity

Rigorous model validation is your best defense against costly mistakes and reputational hits. When you systematically test for bias and fairness, you build real trust with your customers and stakeholders. You end up with AI that delivers more accurate, equitable outcomes instead of just reinforcing old patterns.

The Critical Role of a Human-in-the-Loop

Even the most well-built, thoroughly tested AI model will get things wrong. That’s why a human-in-the-loop (HITL) process is non-negotiable for any high-stakes decision. This isn’t about micromanaging your AI; it's about smart, strategic oversight.

Let’s go back to our B2B marketing example. A smart HITL process would work like this:

- AI Recommendation: The model scores all new leads and flags the top 20% as "high-priority."

- Human Review: A sales rep then looks over that list. They bring their own experience and intuition to the table, spotting a promising lead the AI might have underrated or flagging a dud the AI got wrong.

- Final Decision: The rep makes the final call on who to contact. This not only improves the immediate outcome but also generates valuable feedback that can be used to retrain and sharpen the model over time.

This blend of machine speed and human judgment creates a system that’s smarter and more reliable than either could ever be alone. It’s a core ingredient in any mature and responsible AI program.
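The three steps above can be sketched in a few lines. The function names, lead IDs, and scores are hypothetical, and a real system would pull scores from your CRM rather than a hard-coded dictionary.

```python
import math


def flag_high_priority(scores: dict[str, float], top_fraction: float = 0.20) -> set[str]:
    """Return the lead IDs in the top `top_fraction` by model score."""
    k = max(1, math.ceil(len(scores) * top_fraction))
    ranked = sorted(scores, key=scores.get, reverse=True)
    return set(ranked[:k])


def final_call_list(flagged: set[str], rep_added: set[str], rep_removed: set[str]) -> set[str]:
    """Apply the sales rep's overrides; the human decision always wins."""
    return (flagged | rep_added) - rep_removed


# Hypothetical model scores for five new leads
scores = {"lead-a": 0.91, "lead-b": 0.40, "lead-c": 0.75, "lead-d": 0.22, "lead-e": 0.63}

flagged = flag_high_priority(scores)  # step 1: AI recommendation
call_list = final_call_list(          # steps 2 and 3: human review and final decision
    flagged, rep_added={"lead-e"}, rep_removed=set()
)
overrides = call_list ^ flagged       # disagreements become feedback for retraining

print("Flagged by model:", sorted(flagged))
print("Final call list:", sorted(call_list))
print("Overrides to log:", sorted(overrides))
```

Logging the overrides matters as much as the final list: they are the labeled examples of where the rep and the model disagreed.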

Managing AI Deployment Monitoring and Change

Launching an AI system isn't crossing a finish line; it’s stepping onto a moving treadmill. A truly responsible AI implementation lives and breathes long after deployment day. It demands constant monitoring and thoughtful change management to make sure the tech is actually helping, not quietly creating new headaches.

This is where the real work begins. Your shiny new model is about to meet real-world data—and real-world data is messy, unpredictable, and always changing. Without a watchful eye, even the most accurate algorithm can degrade, drift, or develop biases you never saw coming.

Monitoring Model Performance and Business Impact

Once an AI tool goes live, you need a solid framework for tracking its performance against both its technical promises and its business purpose. This means looking at two things at once: the model's health and its real-world value. Just checking for accuracy is like judging a car's performance by only looking at the speedometer—you're missing the bigger picture.

To do this right, you need a clear set of Key Performance Indicators (KPIs) built specifically for your use case. These metrics give you the hard data you need to prove the AI is still effective and worth the investment.

You'll want to track two kinds of KPIs:

Model Performance KPIs: These tell you if the AI is still technically sound.

- Accuracy and Error Rate: How often is the model right? Is its error rate slowly creeping up?

- Prediction Drift: Is the model's output changing over time? This can be a huge red flag that the world has changed since the model was trained.

- Data Drift: Is the input data different now? Maybe a new customer segment has appeared that the model has never seen before.

Business Impact KPIs: These connect the dots between the model and your bottom line.

- Return on Investment (ROI): Is the AI actually saving money, making money, or creating efficiencies that justify its cost?

- Adoption Rate: Are people actually using the tool? Or are they finding workarounds and sticking to the old way of doing things?

- Human Override Frequency: How often do your experts have to step in and correct the AI’s decision? A high override rate might point to a lack of trust or a drop in accuracy.

Key Takeaway

Effective AI monitoring isn't passive. You have to actively track both technical model health (like accuracy and drift) and its direct business impact (like ROI and user adoption). This gives you the full story of whether your AI is delivering real, sustainable value.
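One common way to put a number on data drift is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it looks now. The sketch below is a simplified pure-Python version. The equal-width binning, the five bins, and the sample data are assumptions, and the often-quoted rule of thumb that a PSI above roughly 0.2 signals meaningful drift is a convention rather than a hard standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 5) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # Small floor avoids log(0) for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: training-time baseline vs. last week's inputs
baseline = [float(v) for v in range(100)]
recent = [float(v + 40) for v in range(100)]

print("PSI, no change:", psi(baseline, baseline))
print("PSI, shifted inputs:", psi(baseline, recent))
```

A check like this can run on a schedule for each important input feature, with the result feeding the same dashboard as accuracy and override rate.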

Practical Example: Monitoring a Forecasting Tool

Let's say a logistics company rolls out an AI-powered tool to forecast delivery demand, aiming to optimize driver schedules and slash fuel costs. At launch, the model hits 95% accuracy, and the company quickly sees a 15% reduction in fuel consumption. A huge win.

But it’s their monitoring protocol that keeps the wins coming.

- Daily Performance Dashboard: The ops team keeps an eye on a dashboard that tracks prediction drift. One week, they notice the model is consistently underestimating demand in one specific city.

- Human Intervention Protocol: Their rules say a regional manager must review any forecast that's more than 20% off from the historical average. The manager digs in and discovers a massive new distribution center just opened nearby—something the original training data knew nothing about.

- Feedback Loop: This crucial insight gets sent straight back to the data science team. They retrain the model with the new data, and just like that, its accuracy is restored, and a potential wave of delivery delays is avoided.

This active monitoring loop stopped a small data issue from snowballing into a major operational failure.
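The human intervention rule in this example is simple enough to express directly. This is a minimal sketch; the function name, the sample history, and the choice to compare against a plain mean are assumptions.

```python
from statistics import mean


def needs_review(forecast: float, history: list[float], threshold: float = 0.20) -> bool:
    """True when the forecast deviates from the historical mean by more than `threshold`."""
    baseline = mean(history)
    if baseline == 0:
        return True  # no meaningful baseline, so send it to a human by default
    return abs(forecast - baseline) / abs(baseline) > threshold


history = [1000, 1040, 980, 1020]   # hypothetical daily deliveries for one city

print(needs_review(1100, history))  # False: within 20% of the historical mean
print(needs_review(1300, history))  # True: more than 20% above the historical mean
```

Any forecast that returns `True` would be routed to the regional manager's queue instead of going straight into the schedule.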

Navigating the Human Side of AI Adoption

The best technology in the world is useless if your team doesn't understand it, trust it, and actually use it. Change management isn't just a buzzword here; it's a critical part of a responsible AI rollout. The goal is to weave the technology into your operations, not just drop it on top of them.

You want to build a culture where AI is seen as a helpful partner—a tool that handles the grunt work so your people can focus on what they do best. This takes clear communication, good training, and an environment where people feel safe to ask questions.

Here are a few strategies that actually work:

- Communicate the "Why": Don't just announce a new tool is coming. Explain the problem it solves and, crucially, answer the "What's in it for me?" question for your employees. Frame it as a way to get rid of their most tedious tasks so they can focus on more interesting, high-impact work.

- Provide Role-Specific Training: Generic training is a waste of time. Show each team exactly how the AI changes their daily workflow. For the sales team, that might mean a demo of how an AI lead scorer helps them find the hottest prospects first.

- Create AI Champions: Find the enthusiasts and early adopters on each team. Give them a little extra training and empower them to be the go-to people for their colleagues. This kind of bottom-up support builds momentum far faster than any top-down mandate.

- Establish Feedback Channels: Make it incredibly easy for people to report bugs, ask questions, or suggest improvements. When people feel like they have a voice, they’re far more likely to get on board with the change.

Impact Opportunity

By investing in continuous monitoring and smart change management, you turn your AI from a fragile, high-maintenance project into a resilient system that actually gets better over time. This proactive approach keeps your tech aligned with your business goals, delivers a consistent ROI, and helps it become a natural part of how your company works—creating a real, lasting advantage.

Answering Your Key Questions About Responsible AI

As you start to put your AI principles into practice, you're going to have questions. That's a given. Business leaders often hit the same roadblocks when it comes to resources, measurement, and managing outside vendors. Here are some direct answers to those common sticking points to help you navigate the real-world challenges of building a responsible AI program.

How Do We Start with Responsible AI if We Have Limited Resources?

Forget the idea that you need a massive budget. Starting small isn’t just an option—it’s the best way to begin. The key is to focus your energy on a single, high-impact project that also happens to be low-risk.

Practical Example: A Pilot Project

Think about an internal tool. Maybe it’s an AI agent that summarizes sales call notes for your CRM or one that helps categorize support tickets. A project like this gives you a safe, contained environment to start building your governance "muscle" without exposing the business to major risk.

Pull together a small, cross-functional team. Grab someone from sales, someone from IT, and maybe a lawyer to act as a pilot governance committee. Use this first project to hammer out a lightweight version of your AI principles and a simple risk assessment checklist. The lessons you learn here will be gold when you’re ready to build a framework for more complex, customer-facing systems.

Key Takeaway: The goal is to build the right habits on a manageable scale. By proving the value and process with a small, internal win, you build momentum and make a stronger business case for broader investment in responsible AI.

What Are the Most Critical KPIs to Track for Responsible AI?

Your standard business metrics like ROI aren't enough here. Responsible AI demands its own scorecard. To get a complete picture, you need to group your metrics into three distinct but connected areas. This ensures you're measuring not just if the AI is working, but if it's working correctly and ethically.

- Model Performance and Fairness: This is the technical bedrock. You absolutely have to track model accuracy, watch for prediction drift over time, and run regular fairness audits to check for demographic parity or other biases that matter for your use case.

- Operational Risk: These KPIs tell you how stable and compliant the system is in the wild. Key metrics include the rate of model-driven errors, the frequency of human overrides (a high rate can signal low trust or poor performance), and, of course, adherence to data privacy compliance standards.

- Business and Ethical Impact: This is where the tech meets your people and principles. Measure things like employee adoption rates, track changes in customer trust scores through surveys, and regularly score the system's alignment with your company's stated AI ethics principles.

Tracking these KPIs together gives you a complete dashboard. It's a clear, data-backed view of whether your AI is truly responsible in practice.
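For the fairness audit in the first group, demographic parity is one of the simpler checks to compute: compare how often each group receives the favorable outcome. The sketch below assumes a hypothetical lead-scoring audit where "selected" means the model marked the lead as high-priority; the group names and data are made up.

```python
def selection_rates(records):
    """records: iterable of (group, selected) pairs, where selected is True or False."""
    totals, positives = {}, {}
    for group, selected in records:
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + int(selected)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(records) -> float:
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: (company size, flagged as high-priority?)
records = [
    ("small", True), ("small", False), ("small", False), ("small", False),
    ("enterprise", True), ("enterprise", True), ("enterprise", True), ("enterprise", False),
]

rates = selection_rates(records)
gap = demographic_parity_difference(records)
print(rates)
print("Demographic parity difference:", gap)
```

A large gap is not automatically a problem, since groups can differ for legitimate reasons, but it tells the governance committee exactly where to ask questions.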

How Can We Ensure Third-Party AI Tools Are Compliant?

Bringing in third-party AI tools can create a massive blind spot. You can't just assume a vendor's default settings align with your company's values or regulatory needs. You have to do your homework—it's non-negotiable.

First, build your responsible AI principles directly into your procurement process. Create a standardized vendor questionnaire with pointed questions about their data handling protocols, bias mitigation techniques, model transparency, and internal governance. Their answers (or lack of them) will tell you a lot.

Second, your contracts need explicit clauses. Make sure they cover data ownership, security standards, and your right to audit their systems or performance data. Finally, set up a formal internal process for reviewing the performance and ethical alignment of every vendor tool, just like you would for your own. Treat vendor AI as an extension of your own systems, because that's what it is.

What Is the Role of a Human-in-the-Loop and When Is It Necessary?

A human-in-the-loop (HITL) system is simply a process that requires human oversight at a critical decision point. It’s not about micromanaging the AI; it’s about embedding accountability right where the stakes are highest.

HITL is absolutely essential in any scenario where an autonomous AI decision could have significant financial, legal, reputational, or ethical consequences. You use AI for speed and scale but reserve final judgment for a human expert.

Practical Examples of Human-in-the-Loop

- Lead Disqualification: An AI can churn through thousands of leads and flag which ones to disqualify based on your criteria, saving the team countless hours. But a sales manager gets the final say, using their gut instinct to rescue a high-potential lead the AI might have missed.

- Fraud Detection: An AI can flag potentially fraudulent transactions in real time, far faster than any human could. A trained fraud analyst, however, is the one who investigates the flagged activity before freezing an account or contacting a customer.

This partnership between human and machine plays to the strengths of both, creating a system that is far stronger and more reliable than either alone.

Impact Opportunity

Implementing a human-in-the-loop process for high-stakes AI decisions is a powerful strategy for risk mitigation. It builds trust both internally with employees and externally with customers by ensuring that critical outcomes are vetted by human experts. This approach not only prevents costly errors but also creates a valuable feedback mechanism to continuously improve the AI model's performance and reliability.

Ready to turn your AI ambitions into a scalable growth system? At Prometheus Agency, we partner with business leaders to build responsible, high-ROI AI solutions that deliver measurable results. Start with our complimentary Growth Audit and AI strategy session to map your path forward. Learn more and book your session at prometheusagency.co.