An AI Readiness Assessment for Teams is a structured look at how prepared your team actually is to use artificial intelligence effectively. It's more than just fiddling with AI tools on an ad-hoc basis. This is about creating a clear framework to measure everything from skills and data quality to your internal processes and culture. The goal is to make sure your AI investments lead to real business results, not just wasted time and money.

A free and simple way to get a baseline understanding is to use a tool like the AI Quotient from Prometheus Agency, which helps quantify your readiness across key business functions.

Why Your Team Needs an AI Readiness Assessment

Jumping into AI without a plan is a recipe for disappointment. Many teams are using AI tools, but very few are set up to get scalable, long-term value from them. This creates a huge gap between simple AI adoption (using a few cool tools) and genuine AI readiness (strategically weaving AI into your core workflows to get measurable results). A formal assessment isn't optional; it’s the first real step in turning AI excitement into a serious competitive advantage.

Key Takeaways

- Adoption vs. Readiness: Using AI tools is easy; being strategically ready to scale their impact is hard.

- Data-Driven Decisions: An assessment replaces guesswork with objective data, showing you exactly where to invest your resources for the biggest impact.

- From Random to Strategic: It shifts your team from "random acts of AI" to a coordinated strategy that aligns with core business goals.

- Risk Mitigation: A proper assessment identifies potential roadblocks in data, skills, and culture before they derail a major project.

The Growing Gap Between Adoption and Readiness

AI tools have spread like wildfire, far faster than the strategic planning needed to support them. The latest numbers from Stanford HAI’s AI Index are telling: 78% of organizations say they use AI, a huge jump from 55% the year before. But here's the catch—widespread use doesn't mean deep integration.

McKinsey’s research backs this up. They found that while nearly nine out of ten organizations are using AI, most haven't embedded it deeply enough into their workflows to see enterprise-level value. This is the problem so many teams are facing: they're using AI, but they aren't ready for it.

Moving from Random Acts of AI to a Strategic Framework

Without a formal assessment, teams usually fall into a pattern of "random acts of AI." Individual team members use different tools without any cohesive strategy. This might create small pockets of efficiency, but it does nothing to improve the system as a whole.

A proper AI readiness assessment gives you a clear, objective starting point. It helps you see the full picture:

- Skill Gaps: Where does your team really need training?

- Data Integrity: Is your data clean, accessible, and actually usable for AI models?

- Process Bottlenecks: Which of your old workflows are holding you back?

- Cultural Hurdles: Is your team culture truly open to experimenting and changing how they work?

This type of diagnostic helps quantify your readiness across key business functions, turning vague feelings into hard data. When you answer these kinds of questions honestly, you can pinpoint exactly where your AI strategy is solid and where it needs immediate attention. That's how you make sure your investments start generating a real return.

The Six Pillars of AI Readiness

A successful AI strategy is about so much more than just fancy new tech. An AI Readiness Assessment for Teams needs to be a 360-degree look at your organization, digging into the interconnected pillars that actually support real, lasting change. If you ignore any one of these areas, you're creating a weak link that can tank your entire effort.

Let's break down the six fundamental dimensions every team has to evaluate. Think of this as the framework for figuring out your true starting point—uncovering both hidden strengths and critical vulnerabilities.

Skills and Talent

This goes way beyond just having a data scientist on payroll. It’s about your team's collective ability to actually understand, use, and work with AI tools in their day-to-day jobs. A team that's truly ready has a healthy mix of deep technical expertise and practical, on-the-ground application skills.

Practical Example: Can your marketing team use prompt engineering to get better campaign ideas? Does your sales team know how to interpret the output from an AI-powered lead scoring model? A major skills gap is one of the fastest ways for an exciting AI project to fizzle out.

Data Infrastructure

Data is the fuel for any meaningful AI application. Without clean, accessible, and relevant data, even the most sophisticated models will produce garbage. This pillar is all about assessing the quality, availability, and management of your data.

Practical Example: A sales team tries to use AI to predict which customers are about to churn. If their CRM data is a mess—full of duplicates, missing fields, and inconsistent entries—the AI's predictions will be useless. Readiness here means having a "single source of truth" and solid processes for keeping your data clean.
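A first-pass hygiene check for the problems described here (duplicates and missing fields) can be run before any AI tooling is involved. The snippet below is a minimal sketch, not a full data-quality pipeline: the field names (`email`, `company`, `industry`), the sample records, and the `audit_records` helper are all hypothetical and would need to match your own CRM export.

```python
from collections import Counter

# Hypothetical required fields; adjust to match your own CRM export.
REQUIRED_FIELDS = ("email", "company", "industry")

def audit_records(records):
    """Return simple hygiene stats: duplicate emails and incomplete records."""
    # Normalize emails so "Ana@Example.com " and "ana@example.com" match.
    emails = [str(r.get("email") or "").strip().lower() for r in records]
    counts = Counter(e for e in emails if e)
    duplicates = sorted(e for e, n in counts.items() if n > 1)

    # Count records where any required field is absent or blank.
    incomplete = sum(
        1 for r in records
        if any(not str(r.get(f) or "").strip() for f in REQUIRED_FIELDS)
    )
    return {
        "total": len(records),
        "duplicate_emails": duplicates,
        "incomplete_records": incomplete,
    }

# Illustrative sample data, not real customer records.
sample = [
    {"email": "ana@example.com", "company": "Acme", "industry": "Retail"},
    {"email": "Ana@Example.com ", "company": "Acme Inc.", "industry": "retail"},
    {"email": "bo@example.com", "company": "", "industry": "SaaS"},
    {"email": "", "company": "Initech", "industry": None},
]
report = audit_records(sample)
print(report)
```

A high share of duplicate or incomplete records is a sign that the Data Infrastructure score should be low, whatever tools are already in place.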

Tools and Technology

This is often where teams want to start, but you have to look at it in the context of everything else. Your tech stack should be an enabler, not a roadblock. This includes everything from your core CRM and marketing platforms to the specific AI tools you're looking to bring in.

A few key questions to ask yourself:

- Do our current systems have APIs that will play nicely with new AI tools?

- Can our IT infrastructure actually handle the increased data processing load?

- Are we picking a tool because it solves a real business problem, or are we just chasing a shiny new object?

Practical Example: A classic mistake is adopting a powerful new tool without thinking through how it fits into existing workflows. The result? Low adoption and a completely wasted investment.

Internal Processes

AI doesn't work in a bubble. It has to be woven into the very fabric of how you operate. This dimension is about looking at your current workflows to see if they’re agile enough to handle AI-driven changes.

Practical Example: If your content creation process has a dozen manual approval steps, just throwing an AI writing assistant at it won't magically make things faster. The process itself is the bottleneck. A ready organization is one that actively looks for ways to redesign workflows around AI, not just slap a layer of AI on top of outdated methods. Our guide to strategic AI enablement digs much deeper into aligning tech and process for the biggest wins.

Governance and Ethics

As AI gets more embedded in business decisions, having a clear governance framework is non-negotiable. This means setting clear rules around data privacy, model transparency, and the ethical use of AI. Skip this, and you’re exposing your business to serious legal, financial, and reputational risks.

Practical Example: This is where you define who is accountable for AI-driven outcomes, how you're protecting customer data, and how you'll ensure your models are fair and unbiased. Getting ahead of this builds trust—both with your team and your customers.

Organizational Culture

Finally, and perhaps most importantly, you have to look at your culture. A culture built on fear, resistance to change, or perfectionism will kill AI innovation. An AI-ready culture is one that embraces experimentation, values constant learning, and isn't afraid of a few small, controlled failures.

Practical Example: Does your leadership team actually encourage people to test new AI tools? Is it safe for someone to raise their hand and say an AI experiment didn't pan out? If your team punishes failure, nobody will take the risks required to find those breakthrough AI applications. You'll know you're truly ready when learning from a failed pilot is seen as a win.

Building Your Assessment Framework

With the six core dimensions in mind, it's time to get practical. Let's move from theory to action and build your team's AI readiness assessment. This isn’t just about making a checklist; it's about creating a tool that gathers real, objective data to give you a clear-eyed view of where you stand today. A solid framework doesn't have to be complicated. The goal is to create something repeatable and measurable that actually fits your business.

Nail Down Your Goals and Scope

Before you draft a single question, you have to know exactly what you’re trying to accomplish. Are you checking readiness for one specific AI-powered sales tool? Or are you looking at the entire marketing department's ability to run AI-driven campaigns? A tight scope is your best friend here.

Start by answering a few non-negotiable questions:

- What specific business problem will AI solve? (e.g., slash customer response times, filter for better leads, kill manual reporting).

- Which team or department are we focusing on? (e.g., the customer support team, the B2B sales division).

- What does a win look like after we implement AI? (e.g., a 20% drop in manual data entry, a 15% boost in qualified leads).

Answering these up front will shape every other decision you make.

Craft Questions That Are Specific to Each Role

Generic questions get you generic answers. The real power of a team-based AI assessment is in its specificity. You need to frame your questions around both the six dimensions and the unique jobs people do every day. To really dig in and collect meaningful data, looking into essential user research methods can give you a great playbook for structuring your interviews and surveys.

Practical Examples of Role-Specific Questions

- For a Sales Leader: “On a scale of 1-5, how confident are you that our CRM data is clean enough to train an AI lead-scoring model without sending us on a wild goose chase?”

- For a Marketing Ops Specialist: “How much time are you personally spending on segmenting email lists, and where do you see a clear shot for AI to automate that work?”

- For a Customer Support Rep: “How often do you have to dig through our knowledge base for answers? Is it good enough to actually power a helpful AI chatbot, or would it just frustrate customers?”

- For an IT Manager: “Do our current systems have open APIs? I need to know if we can plug in third-party AI tools without a massive headache.”

See how each question is tied to a real-world task? This pushes people to give you concrete answers, not just vague opinions.

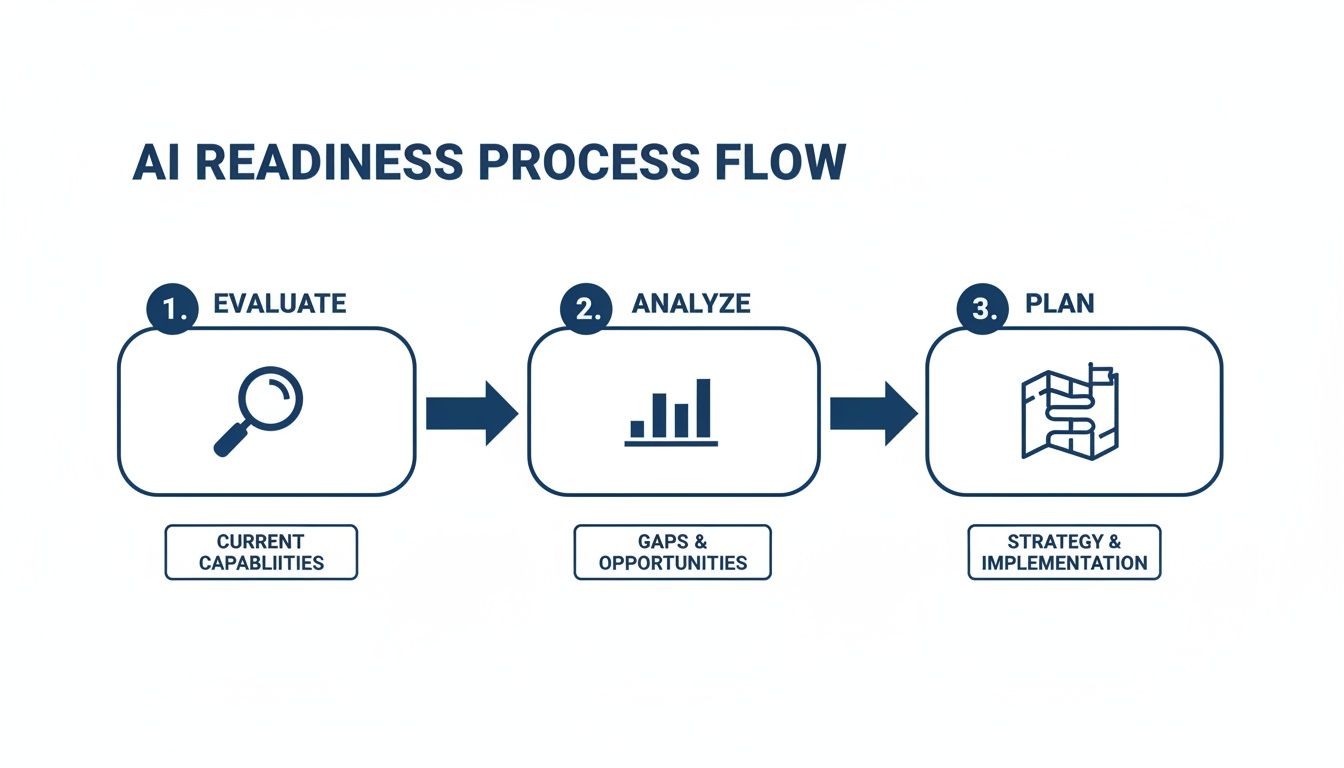

The diagram above shows a straightforward, three-step model (Evaluate, Analyze, Plan) that provides a clear path for turning all that data you collect into an actual strategic roadmap.

Set Up a Simple Scoring Model

To keep things objective and easy to compare, use a scoring model. This is how you turn all that qualitative feedback into hard numbers, which is way more effective for spotting trends and making your case to leadership. A simple 1-5 scale usually does the trick:

- 1 - Not Ready: Major gaps. This is a roadblock to adoption.

- 2 - Minimally Ready: Some basics are there, but a lot of work is needed.

- 3 - Moderately Ready: Foundations are in place, with clear strengths and weaknesses.

- 4 - Mostly Ready: We're in good shape, with only minor things to fix.

- 5 - Fully Ready: The team is good to go and can adopt AI with no friction.

Once you assign a score to each answer, you can average them out for each of the six dimensions (Skills, Data, Tools, etc.). This gives you an instant snapshot of where you’re strong and where you’re most vulnerable.
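The averaging step can be sketched in a few lines of Python. This is an illustrative example under stated assumptions: the responses are made-up data, the short dimension labels are shorthand for the six pillars, and `dimension_averages` is a hypothetical helper rather than part of any particular survey tool.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical survey responses: (dimension, score on the 1-5 scale).
responses = [
    ("Skills", 3), ("Skills", 2), ("Skills", 4),
    ("Data", 2), ("Data", 1),
    ("Tools", 4), ("Tools", 5),
    ("Processes", 3), ("Governance", 2), ("Culture", 4),
]

def dimension_averages(responses):
    """Average the 1-5 scores per dimension, rejecting out-of-range values."""
    by_dimension = defaultdict(list)
    for dimension, score in responses:
        if not 1 <= score <= 5:
            raise ValueError(f"score {score} for {dimension} is outside 1-5")
        by_dimension[dimension].append(score)
    return {d: round(mean(s), 2) for d, s in by_dimension.items()}

averages = dimension_averages(responses)

# Print dimensions from weakest to strongest.
for dimension, avg in sorted(averages.items(), key=lambda kv: kv[1]):
    print(f"{dimension:<12}{avg:.2f}")
```

Sorting from weakest to strongest puts the most vulnerable dimension at the top of the list, which is usually where the conversation with leadership should start.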

Turning Your Assessment into a Strategic Roadmap

You’ve gathered the scores, you’ve done the interviews, and now you have a pile of data. This is where the real work begins—translating those raw numbers and notes into a clear, prioritized action plan. An AI readiness assessment for teams is only as good as the decisions it helps you make. It all starts with a practical gap analysis. Look at each of the six dimensions and compare your current state (your scores) to where you need to be.
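As a rough illustration, a gap analysis is a subtraction per dimension followed by a sort. The sketch below assumes you have already agreed on a target score for each dimension; the `current` and `target` numbers and the `gap_analysis` helper are hypothetical.

```python
# Hypothetical current averages and agreed targets on the same 1-5 scale.
current = {"Skills": 3.0, "Data": 1.5, "Tools": 4.5,
           "Processes": 3.0, "Governance": 2.0, "Culture": 4.0}
target = {"Skills": 4.0, "Data": 4.0, "Tools": 4.0,
          "Processes": 3.5, "Governance": 4.0, "Culture": 4.0}

def gap_analysis(current, target):
    """Return (dimension, gap) pairs, largest shortfall first.

    A dimension that already meets or exceeds its target gets a gap of 0.
    """
    gaps = {d: max(0.0, target[d] - current[d]) for d in target}
    return sorted(gaps.items(), key=lambda kv: kv[1], reverse=True)

gaps = gap_analysis(current, target)
for dimension, gap in gaps:
    print(f"{dimension:<12}gap {gap:.1f}")
```

With these sample numbers, Data and Governance surface as the largest shortfalls, while Tools already exceeds its target.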

Getting a Clear Picture of Your Team's Readiness

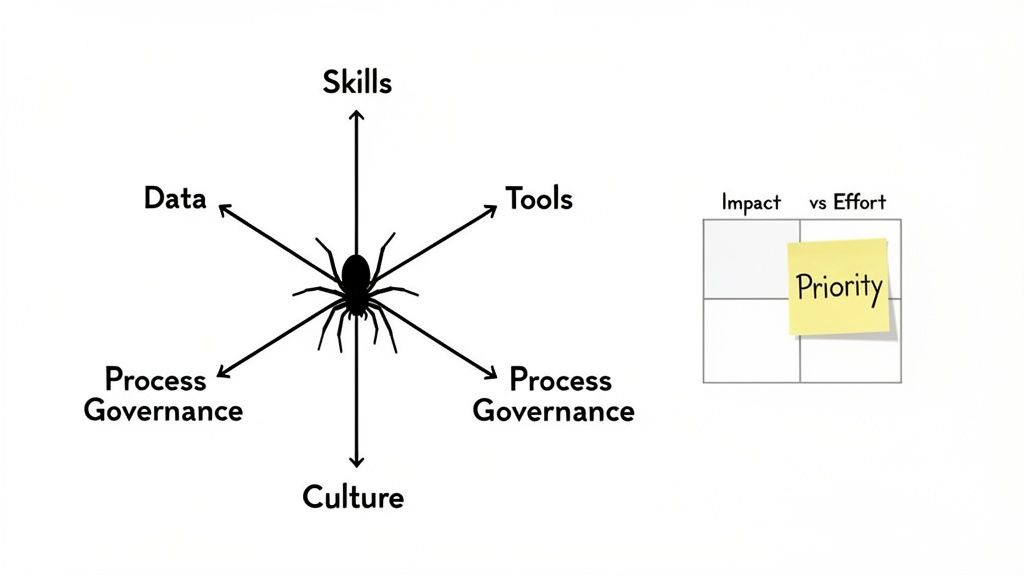

Spreadsheets full of numbers are hard for anyone to get excited about. One of the most powerful ways to communicate these findings is with a spider chart (also called a radar chart). It gives everyone an at-a-glance snapshot of your team’s strengths and weaknesses.

This kind of visual makes imbalances jump off the page. Maybe your chart shows a high score in Tools but a dangerously low one in Data Infrastructure. Right away, it’s clear you have the fancy software but lack the quality data to make it do anything useful. That kind of clarity is what gets you the buy-in you need for your recommendations.

Moving From Gaps to Priorities

Once you’ve spotted the gaps, you need to turn them into strategic initiatives. But not all gaps are created equal. Your job is to figure out which ones will give you the most bang for your buck. A common issue that emerges is information silos. For many teams, the assessment reveals their biggest hurdle isn't a lack of tools but the simple inability to find and use the information they already have. In those situations, looking into AI-powered knowledge management can be a game-changer.

A Simple Framework for Prioritizing Your Next Moves

We recommend an Impact vs. Effort matrix to sort through your potential projects. It's a straightforward way to categorize initiatives and focus your team's energy where it matters most. Just plot each potential project on a four-quadrant grid:

- Quick Wins (High Impact, Low Effort): Jump on these first. They deliver immediate, visible value and build crucial momentum. Practical Example: Running a series of prompt engineering workshops for the marketing team—a small investment that can immediately boost their content creation.

- Major Projects (High Impact, High Effort): These are the big, foundational initiatives you need for long-term success, but they require serious planning and resources. Practical Example: A complete overhaul of your CRM data hygiene or developing a formal AI governance policy from scratch.

- Fill-ins (Low Impact, Low Effort): These are the "nice-to-haves." You can tackle them when you have downtime, but don't let them distract from the bigger fish. Practical Example: Creating a shared library of company-approved AI tools.

- Reconsider (Low Impact, High Effort): Stay away from these. They burn through time, money, and morale for very little return. Practical Example: Building a custom, in-house AI tool when a perfectly good third-party solution already exists.

By sorting every initiative this way, you transform a long, overwhelming list of problems into a sequenced, strategic roadmap.
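The quadrant logic is simple enough to sketch in code. The example below assumes each initiative has been scored 1-5 for impact and for effort, and treats a score of 3 or higher as "high"; that threshold, the scores, and the `quadrant` helper are assumptions for illustration only.

```python
def quadrant(impact, effort, threshold=3):
    """Classify an initiative scored 1-5 on impact and effort into a quadrant."""
    high_impact = impact >= threshold
    high_effort = effort >= threshold
    if high_impact and not high_effort:
        return "Quick Win"
    if high_impact and high_effort:
        return "Major Project"
    if not high_impact and not high_effort:
        return "Fill-in"
    return "Reconsider"

# Initiatives from the examples above; the (impact, effort) scores are guesses.
initiatives = {
    "Prompt engineering workshops": (4, 2),
    "CRM data hygiene overhaul": (5, 5),
    "Shared library of approved AI tools": (2, 1),
    "Custom in-house AI tool": (2, 5),
}

# Sequence the roadmap: quick wins first, "reconsider" items last.
ORDER = ["Quick Win", "Major Project", "Fill-in", "Reconsider"]
roadmap = sorted(
    initiatives,
    key=lambda name: ORDER.index(quadrant(*initiatives[name])),
)
for name in roadmap:
    print(f"{quadrant(*initiatives[name]):<14}{name}")
```

In practice the scores come out of a team discussion rather than a formula; the code only makes the sorting rule explicit and repeatable.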

Translating Your Roadmap into a Winning AI Pilot

A strategic roadmap is just a document until you put it into action. Once you've completed your AI Readiness Assessment for Teams, the next step is turning your prioritized ideas into a real-world project. This is your chance to move from planning to proving, selecting an initial pilot that builds momentum and gets the buy-in you need for bigger things down the road. A successful first pilot is your best internal marketing tool.

Criteria for Selecting the Perfect Pilot

Choosing your first project is a big decision. You're looking for that sweet spot between visibility, impact, and feasibility. Go too big, and you risk falling flat. Go too small, and no one will care enough to fund the next one.

- Clear Business Impact: Does this solve a real, nagging problem? The result needs to tie directly to a metric that matters.

- High Feasibility: Can you get this done with the team and tech you have (or can easily get)?

- Measurable Success: Can you define what a "win" looks like in hard numbers? Vague goals won't cut it.

- Visible and Valued: Will the right people see and appreciate the results?

Defining What Success Looks Like (With a Practical Example)

Before you begin, everyone needs to agree on the success metrics. They should be specific, measurable, achievable, relevant, and time-bound (SMART).

Practical Example: A Content Personalization Engine

Imagine your marketing team wants to pilot an AI tool to personalize website content for different visitors.

- Metric 1: Boost conversion rates on key landing pages by 15% within 90 days.

- Metric 2: Drop the bounce rate for new visitors by 20% in the same timeframe.

- Metric 3: Increase the average time on page by 30 seconds for your target audience.

These metrics leave no room for debate. After 90 days, the team will know if the pilot worked.
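One way to keep the 90-day review honest is to write the pass/fail rules down before the pilot starts. The sketch below is illustrative only: it assumes the two percentage targets are relative to the pre-pilot baseline (a 15% boost means 1.15 times the baseline rate), and the baseline and observed figures are invented.

```python
def relative_change(baseline, observed):
    """Fractional change from baseline, e.g. 0.15 for a 15% increase."""
    return (observed - baseline) / baseline

# Hypothetical measurements before the pilot and at day 90.
baseline = {"conversion_rate": 0.040, "bounce_rate": 0.60, "time_on_page_s": 75}
observed = {"conversion_rate": 0.044, "bounce_rate": 0.45, "time_on_page_s": 110}

results = {
    # Metric 1: conversion rate up by at least 15% (relative to baseline).
    "conversion": relative_change(
        baseline["conversion_rate"], observed["conversion_rate"]) >= 0.15,
    # Metric 2: bounce rate down by at least 20% (relative to baseline).
    "bounce": relative_change(
        baseline["bounce_rate"], observed["bounce_rate"]) <= -0.20,
    # Metric 3: average time on page up by at least 30 seconds (absolute).
    "time_on_page": observed["time_on_page_s"] - baseline["time_on_page_s"] >= 30,
}
pilot_succeeded = all(results.values())

for metric, passed in results.items():
    print(f"{metric:<14}{'met' if passed else 'missed'}")
print("Pilot succeeded:", pilot_succeeded)
```

With these sample numbers the conversion rate rises by roughly 10%, short of the 15% target, so the pilot misses one of its three goals even though the other two are met. Agreeing up front on whether a target is relative or absolute avoids that argument at day 90.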

Assembling Your Pilot Team and Feedback Loop

Your pilot team should be a small, cross-functional group of people who are both capable and genuinely excited about the project. At a minimum, you need three roles: a project lead, a subject matter expert, and someone with the tech chops to make it happen.

Don't forget to factor in the talent market. Data from JobsPikr’s AI Readiness Index found that AI professionals earn about a 32% salary premium over similar non-AI roles, with hiring delays averaging 77 days. This is a huge signal to lean on and upskill your current team for these initial pilots whenever you can.

Once your team is set, get a tight feedback loop going:

- Daily Check-ins: A quick 15-minute huddle to cover progress and roadblocks.

- Weekly Reviews: A more formal check-in with stakeholders to track against your metrics.

- End-User Feedback: Get regular input from the people actually using the new tool or process.

Proving ROI with Another Real-World Example

Let's look at another classic pilot: an AI-powered lead scoring system for a B2B sales team. This is a great starting point because it hits revenue directly. A solid readiness assessment will give you the confidence to launch something like this. You can see how this fits into a broader strategy in our guide to AI-powered lead generation strategies.

- The Problem: Sales reps are chasing too many dead-end leads.

- The Pilot: Roll out an AI model that scores leads based on firmographic data, user engagement, and past conversion history.

- The Metrics: Increase the lead-to-opportunity conversion rate by 25% and shorten the sales cycle by 10% in one quarter.

- The Outcome: By focusing on the high-scoring leads, the sales team lifts its lead-to-opportunity conversion rate by 30%, clearing the 25% target, proving the pilot's ROI, and getting the green light for a full rollout.
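To make the idea of scoring on three families of signals concrete, here is a deliberately simple sketch. It is a hand-weighted heuristic standing in for a trained model, not an AI lead-scoring system: the `Lead` fields, the weights, and the cut-offs are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Lead:
    name: str
    employees: int          # firmographic signal
    visits_last_30d: int    # engagement signal
    past_purchases: int     # conversion-history signal

def score_lead(lead):
    """Hand-weighted 0-100 score; a stand-in for a trained model."""
    firmographic = 30 if lead.employees >= 200 else 10
    engagement = min(lead.visits_last_30d, 10) * 4   # capped at 40 points
    history = 30 if lead.past_purchases > 0 else 0
    return firmographic + engagement + history

# Illustrative leads, not real companies' data.
leads = [
    Lead("Acme", employees=500, visits_last_30d=12, past_purchases=1),
    Lead("Initech", employees=40, visits_last_30d=2, past_purchases=0),
    Lead("Globex", employees=250, visits_last_30d=5, past_purchases=0),
]

# Reps work the list from the highest score down.
ranked = sorted(leads, key=score_lead, reverse=True)
for lead in ranked:
    print(f"{lead.name:<10}{score_lead(lead)}")
```

A real pilot would replace the fixed weights with a model fitted to your own conversion history, which is exactly why the Data Infrastructure pillar matters so much for this use case.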

Your Top AI Readiness Questions Answered

When you're about to kick off an AI readiness assessment, a lot of practical questions pop up. Leaders want to know what they're getting into, what roadblocks to expect, and what the whole process actually looks like. Let's tackle the most common ones.

How Long Does This Actually Take?

For a single team or a focused department, you can usually get a solid assessment done in about two to four weeks. That timeframe covers everything from the initial planning and stakeholder interviews to data analysis and presenting your final roadmap. The goal is to keep things moving and ensure the findings are still relevant when you get them.

How Do I Get Leadership and Other Teams on Board?

Simple: talk about business outcomes, not technology. Frame the assessment around what it will achieve for the company. Drop the tech jargon and focus on results.

Impact Opportunity: Instead of saying, "We need to assess our tech stack for AI compatibility," say, "Let's figure out how we can use AI to boost our qualified lead conversion by 15% this year." You've just shifted the conversation from a cost (new tech) to a value-add (more revenue). This frames the assessment as a strategic move to unlock growth, improve efficiency, and get a leg up on the competition.

What’s the Biggest Mistake Teams Make?

Hands down, the most common mistake is obsessing over technology and data while completely ignoring the people and processes. You can have the slickest AI tools and the cleanest data, but if the human side of the equation is broken, the whole thing is destined to fail. If your team isn't trained, if your workflows are outdated, and if your company culture punishes experimentation, that shiny new AI tool is just going to collect dust.

We Did the Assessment... Now What?

Your first move is to share what you learned with key stakeholders and get everyone to agree on one high-impact, low-risk pilot project. Don't try to fix everything at once. Score a quick, visible win. A successful first pilot proves the value of this whole approach and builds the momentum you need for bigger things. That initial proof of concept makes it much easier to get the resources and excitement you need for more ambitious AI projects down the road.

Ready to move from theory to action? Prometheus Agency helps growth leaders conduct a thorough AI readiness assessment and build a practical roadmap for success. Get your complimentary AI Quotient score and see where you stand today.