Scaling AI isn't about chasing a "wow" moment in the boardroom. From my experience talking with leaders every day, it's about building integrated, resilient systems that solve clear business problems and deliver real value.

Success hinges on picking the right pilot from the start: one designed for scalability, tied to measurable ROI, and backed by a solid plan for data, infrastructure, and user adoption. I always say it's less about the AI itself and more about the strategic groundwork you lay before a single line of code is written. Deciding which pilots truly deserve to move into production is the conversation that separates success from failure.

Key Takeaways

- Start with ROI, Not Tech: The most successful AI projects begin by identifying a specific, high-value business problem with a measurable financial impact, not with a flashy technology.

- Data is the Foundation: A pilot can succeed with clean, curated data, but production requires robust, automated data pipelines. Data preparation accounts for 60-80% of the effort in any serious AI project.

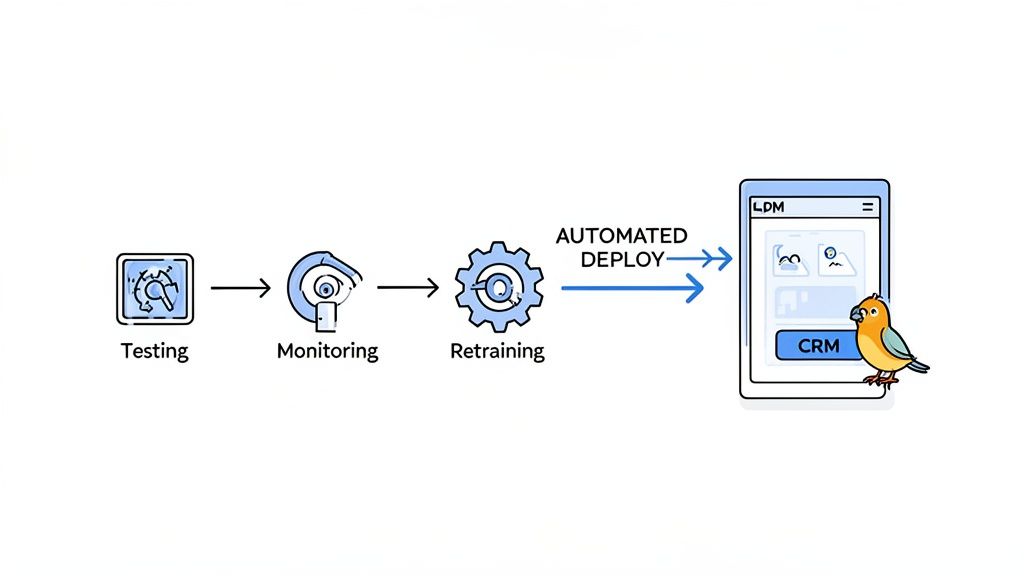

- MLOps is Non-Negotiable: To move from a manual pilot to an automated production system, you must implement Machine Learning Operations (MLOps) for continuous monitoring, retraining, and deployment.

- Adoption is a Human Challenge: The biggest barrier to scaling is human adoption. Success depends on a people-first change management plan that focuses on how the AI makes employees' jobs easier.

- Integrate, Don't Isolate: AI tools must be seamlessly embedded into existing workflows (like your CRM or ERP) to be effective. If it requires users to switch contexts, it will fail.

Why Most AI Pilots End Up in Purgatory

I've seen it happen more times than I can count. A brilliant AI pilot gets a standing ovation, but it never actually makes its way into the daily workflow. The initial buzz dies down, the budget evaporates, and the project gets stuck in what I call 'pilot purgatory.'

It becomes a cool tech demo gathering dust on a shelf, a cautionary tale whispered in hallways.

The issue is almost never the technology. Modern AI models are incredibly powerful. The failure is almost always in the strategy—or the complete lack of one. Too many leaders get mesmerized by flashy tech without asking the most fundamental question: "What specific, measurable business problem are we solving?"

This oversight is the single biggest reason promising AI projects stall out.

The Allure of the Impressive Demo

It’s surprisingly easy to create an AI pilot that looks amazing in a controlled environment. Use a clean, perfectly curated dataset, and you can showcase a model’s potential, creating that 'wow' moment for stakeholders.

But the real world is messy.

Production data is flawed, user behavior is unpredictable, and your existing systems have their own quirks. A pilot built only for a demo simply isn't engineered to handle these realities. The goal isn’t a round of applause; it's tangible business growth. That means shifting your entire focus from "Can we build this?" to "Should we build this, and how will it actually integrate and scale?"

The success of an AI initiative isn't measured by how cool the pilot is. It's measured by its successful transition into a production system that delivers ongoing, measurable business value. Without a clear path to production, a pilot is just an expensive science fair project.

Confronting the Sobering Statistics

This isn't just my opinion; the data paints a stark picture. According to a recent MIT report, a staggering 95% of generative AI pilots fail to deliver measurable P&L impact within six months.

Impact Opportunity

The 5% of companies that succeed don't just get lucky; they are ruthlessly strategic. By focusing on a single, high-impact business problem and building a clear path to production from day one, they capture an enormous competitive advantage. Escaping 'pilot purgatory' means moving from scattered experiments to integrated, value-driving systems that directly boost revenue or cut costs.

Why? The failure rate is often tied to a rush to plug in new models without establishing the operational fundamentals first. You can dig into the full MIT NANDA Report analysis on Fortune to see the breakdown.

This statistic reveals a massive disconnect. Organizations are eager to adopt AI, but many are unprepared for the strategic, cultural, and operational shifts required for scaling AI from pilot to production. They completely underestimate the deep integration work and change management needed to turn a promising concept into a revenue-generating system.

To help you sidestep these common traps, here's a quick look at the most frequent obstacles and the mindset shift needed to overcome them.

Common AI Scaling Pitfalls and Strategic Solutions

| Common Pitfall | Strategic Solution |
|---|---|
| Solving a Non-Problem | Focus on a high-value, specific business pain point. If it doesn't solve a real problem, it's a vanity project. |
| "Demo-First" Mindset | Build for reality from day one. Assume messy data, user error, and integration challenges. |
| No Clear Owner | Assign a single, empowered executive sponsor who is accountable for the business outcome, not just the tech. |
| Ignoring the End-User | Involve the people who will actually use the system early and often. Their adoption determines success. |
| Treating Data as an Afterthought | Make data strategy the first step. Data quality, access, and governance are non-negotiable prerequisites. |
| Neglecting Change Management | Plan for training, communication, and workflow adjustments. The tech is the easy part; changing human behavior is hard. |

These pitfalls aren't just technical hurdles; they're fundamental strategic blunders. Avoiding them requires a deliberate, disciplined approach that prioritizes business outcomes over technological novelty.

This guide is designed to bridge that gap. We're going to move beyond the hype and give you a practical, no-nonsense roadmap to make sure your AI investment translates into measurable growth—not just another project lost in purgatory.

Building a Rock-Solid Foundation Before You Build

Before anyone writes a single line of code for a production model, the real work has to be done. From my experience, this is the phase where AI projects are secretly won or lost. It has nothing to do with algorithms or infrastructure just yet. It's all about strategic clarity and human alignment—the two things that determine whether your pilot has a fighting chance of ever reaching production.

The temptation is to dive right into the tech. But the most successful projects I’ve been a part of always started with rigorous, non-technical planning. This is where you trade vague corporate goals for ruthlessly specific, ROI-driven objectives. Without that clarity, your team is just flying blind, and your pilot is destined to become a footnote instead of a core business asset.

Define Success with Unforgiving Clarity

"Improve efficiency" is not a goal; it's a wish. The real work of scaling AI from pilot to production starts by defining what success looks like in concrete, measurable terms. You need a target the finance department can understand and the sales team can actually get excited about.

You have to think in terms of tangible business outcomes. For example, instead of a goal to "optimize lead scoring," the objective should be to "increase the marketing-qualified-lead to sales-accepted-lead conversion rate by 20% within six months." Now that's a goal you can build a business case around, measure against, and hold people accountable for.

Practical Examples

Instead of: "Automate customer support."

Try: "Reduce average ticket resolution time for Tier-1 inquiries by 40% by deploying an AI chatbot that handles the top five most common questions."

Instead of: "Enhance sales forecasting."

Try: "Improve forecast accuracy to within a 5% margin of error on a quarterly basis, reducing excess inventory costs by $500,000 annually."
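Goals like these are valuable precisely because they can be checked mechanically. As a minimal sketch with made-up numbers, here is how a team might test the two targets above: a percentage reduction in ticket resolution time, and quarterly forecasts that must stay within a 5% margin of error. The function names and sample figures are illustrative assumptions, not a prescribed method.

```python
def pct_reduction(baseline: float, observed: float) -> float:
    """Fractional reduction from the baseline, e.g. 0.40 means a 40% drop."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - observed) / baseline


def within_margin(forecasts: list[float], actuals: list[float], margin: float = 0.05) -> bool:
    """True if every forecast lands within `margin` (as a fraction) of its actual value."""
    if len(forecasts) != len(actuals):
        raise ValueError("forecasts and actuals must be the same length")
    for forecast, actual in zip(forecasts, actuals):
        if actual == 0:
            raise ValueError("actual values must be non-zero to compute a percentage error")
        if abs(forecast - actual) / abs(actual) > margin:
            return False
    return True


# Hypothetical figures: Tier-1 resolution time fell from 10.0 to 6.0 hours.
resolution_drop = pct_reduction(baseline=10.0, observed=6.0)
print(f"Resolution time reduced by {resolution_drop:.0%} (target: at least 40%)")

# Hypothetical quarterly forecasts vs. actuals, in units sold.
quarters_ok = within_margin([102.0, 97.0, 104.0, 100.0], [100.0, 100.0, 100.0, 100.0])
print("Forecast within 5% every quarter:", quarters_ok)
```

The point isn't the code itself; it's that a goal you can express this plainly is one your finance team can verify without debate.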

This level of specificity forces difficult but necessary conversations early on. It makes sure the problem you're solving is one the business genuinely cares about—and one that justifies the investment for a full-scale rollout.

Assembling Your Cross-Functional Governance Team

An AI project can't live in an IT silo. One of the most critical steps I've seen leaders take is building a cross-functional governance team right from the get-go. This isn't just another technical steering committee; it's a coalition of business leaders who have a direct stake in the project's outcome.

Your team absolutely must include representatives from key departments to ensure total alignment and head off challenges from every angle.

Practical Example: A Manufacturing Client

I recently worked with a mid-market manufacturing company that wanted to use AI to sharpen its go-to-market motion. Instead of just talking to the IT and sales VPs, we pulled together a team that included:

- Head of Operations: To map the entire production-to-delivery workflow and pinpoint data sources and bottlenecks.

- CFO: To model the financial impact of reducing lead-to-quote time and validate the projected ROI.

- Sales Manager: To provide the on-the-ground truth about what the sales team actually needed to close deals faster.

- IT Lead: To assess the feasibility of integrating a new AI tool with their legacy ERP and CRM systems.

Getting these different perspectives in one room allowed us to move past generic ideas quickly. We mapped their entire GTM process and zeroed in on the single biggest point of friction: the time it took to generate a custom quote for a complex order.

That became our pilot's focus. The objective was crystal clear: "Reduce average quote generation time from 3 days to 4 hours."

By defining a specific, high-impact business problem with cross-functional buy-in, we designed a pilot that wasn't just a technical exercise. It was engineered from day one to solve a real-world constraint, making its path to production a logical and justifiable next step.

This foundational work is non-negotiable. Taking the time to build this strategic and human infrastructure is what separates the projects that succeed from those that stall. A key part of this is understanding your organization's readiness, which is why assessing your team's overall AI Quotient can provide valuable insights into your strengths and weaknesses before you commit serious resources.

Mastering Your Data and Infrastructure for Scale

An AI model is only as good as the data it’s fed and the infrastructure that supports it. I talk about this all the time because it’s the unglamorous, behind-the-scenes work that truly separates successful AI projects from the ones that crumble under pressure.

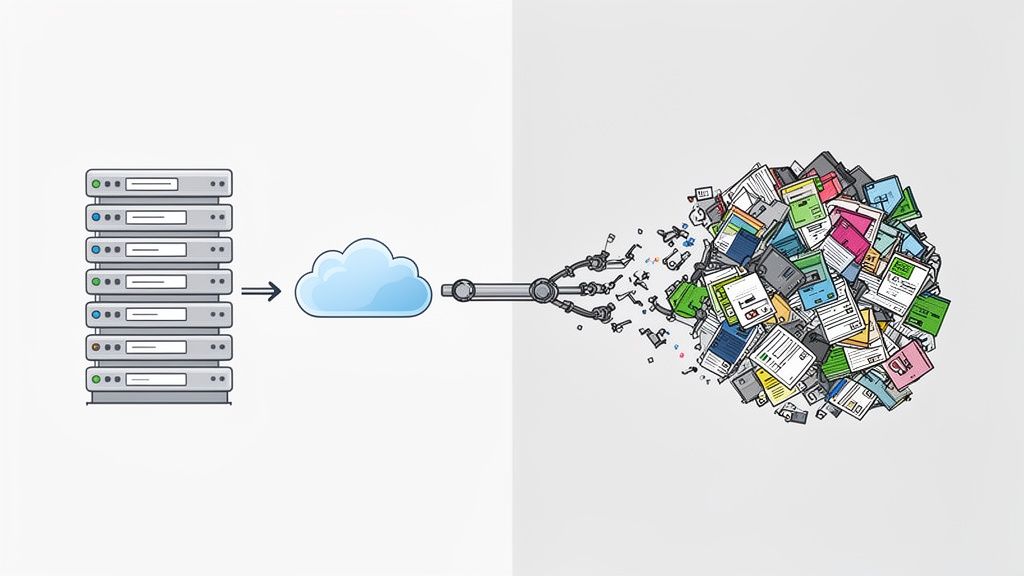

A pilot can get by on a clean, hand-picked dataset. But production is an entirely different beast—it’s messy, unpredictable, and relentless.

This is where we have to tackle the non-negotiable task of getting your data and infrastructure ready for the real world. Many leaders get swept up in the model's potential and push data prep to the side. In my experience, that’s a recipe for disaster.

The reality is that 60-80% of the total effort in any serious AI project goes into data preparation. Skimping here is a direct cause of failure. In fact, among dissatisfied firms, 33% found that their tech worked fine in the pilot but crumbled at scale precisely because they had overlooked these data demands.

Conducting a Brutally Honest Data Readiness Assessment

Before you even think about scaling, you need a crystal-clear picture of your data landscape. This means a thorough, no-holds-barred data readiness assessment. It’s not about just having data; it's about having the right data, and making sure it’s accessible, clean, and reliable.

Start by asking the tough questions:

- Data Availability: Do we actually have enough historical data to train the model effectively? Is it locked away somewhere our systems can't even reach?

- Data Quality: How clean is this stuff, really? Are we talking about missing values, duplicates, or just plain old inconsistencies?

- Data Relevance: Does this data truly reflect the real-world problems we're trying to solve in production?

- Data Governance: Who owns this data? What privacy and compliance rules do we absolutely have to follow?
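Availability, relevance, and governance need people in a room, but data quality can be measured from day one. The sketch below, using only Python's standard library, profiles a batch of records for missing values and exact duplicate rows. The field names and sample rows are hypothetical, and a real assessment would run against your actual sources and add checks for consistency and freshness.

```python
from collections import Counter
from typing import Any


def profile_records(records: list[dict[str, Any]], fields: list[str]) -> dict[str, Any]:
    """Report missing values per field and exact duplicate rows (assumes hashable values)."""
    missing = {field: 0 for field in fields}
    for record in records:
        for field in fields:
            value = record.get(field)
            # Treat None and blank strings as missing.
            if value is None or (isinstance(value, str) and not value.strip()):
                missing[field] += 1

    # Rows that are identical across all profiled fields count as duplicates.
    signatures = Counter(tuple(record.get(field) for field in fields) for record in records)
    duplicate_rows = sum(count - 1 for count in signatures.values() if count > 1)

    total = len(records)
    return {
        "rows": total,
        "missing": missing,
        "missing_rate": {f: (missing[f] / total if total else 0.0) for f in fields},
        "duplicate_rows": duplicate_rows,
    }


# Hypothetical CRM export with one duplicated row and one half-empty record.
sample = [
    {"account_id": "A-100", "region": "East", "annual_revenue": 1_200_000},
    {"account_id": "A-100", "region": "East", "annual_revenue": 1_200_000},
    {"account_id": "A-101", "region": "", "annual_revenue": None},
]
report = profile_records(sample, ["account_id", "region", "annual_revenue"])
print(report)
```

Even a crude profile like this turns "How clean is this stuff, really?" into numbers you can track as the cleanup work progresses.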

Treating this assessment as a simple checkbox exercise is a huge mistake. It needs to be a deep-dive investigation that uncovers every single flaw and gap in your data ecosystem.

Building Robust, Automated Data Pipelines

A pilot might run on a static CSV file you pull once. A production system, on the other hand, needs a constant, reliable flow of information. That requires building robust data pipelines that automatically ingest, clean, transform, and feed data to your model without missing a beat.

This is where many organizations get stuck. They completely underestimate the engineering work it takes to go from a manual data pull to an automated, resilient pipeline. Your goal is to create a system that can handle the chaos of production data—API failures, format changes, and unexpected surges in volume—without needing constant human babysitting.
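To make "without constant human babysitting" concrete, here is a minimal sketch of two pipeline behaviors: retrying a flaky source with exponential backoff, and quarantining rows whose format doesn't match an expected schema instead of crashing or silently feeding them to the model. The `fetch` callable stands in for whatever API or database call your pipeline makes; the schema, the `FlakySource` simulator, and the sample rows are all hypothetical.

```python
import time
from typing import Any, Callable

Row = dict[str, Any]

# Hypothetical schema for an order feed: field name -> expected Python type.
ORDER_SCHEMA: dict[str, type] = {"order_id": str, "quantity": int, "unit_price": float}


def fetch_with_retry(fetch: Callable[[], list[Row]], attempts: int = 3, base_delay: float = 1.0) -> list[Row]:
    """Call `fetch`, retrying transient network errors with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fetch()
        except (ConnectionError, TimeoutError):
            if attempt == attempts - 1:
                raise  # out of retries: surface the error to monitoring and alerting
            time.sleep(base_delay * 2 ** attempt)  # waits 1s, 2s, 4s, ... by default
    raise ValueError("attempts must be at least 1")


def split_valid(rows: list[Row], schema: dict[str, type]) -> tuple[list[Row], list[Row]]:
    """Separate rows that match the schema from rows to quarantine for review.

    This is a strict type check; a production pipeline might first coerce
    values (e.g. "4" -> 4) before rejecting a row.
    """
    valid: list[Row] = []
    quarantined: list[Row] = []
    for row in rows:
        matches = all(field in row and isinstance(row[field], expected) for field, expected in schema.items())
        (valid if matches else quarantined).append(row)
    return valid, quarantined


def run_ingest(fetch: Callable[[], list[Row]], schema: dict[str, type] = ORDER_SCHEMA, **retry_options: Any):
    rows = fetch_with_retry(fetch, **retry_options)
    return split_valid(rows, schema)


class FlakySource:
    """Hypothetical source that fails a set number of times before returning rows."""

    def __init__(self, failures: int, rows: list[Row]):
        self.failures = failures
        self.rows = rows
        self.calls = 0

    def __call__(self) -> list[Row]:
        self.calls += 1
        if self.calls <= self.failures:
            raise ConnectionError("simulated outage")
        return self.rows


source = FlakySource(failures=2, rows=[
    {"order_id": "SO-1", "quantity": 4, "unit_price": 19.5},
    {"order_id": "SO-2", "quantity": "four", "unit_price": 19.5},  # upstream format change
])
valid, quarantined = run_ingest(source, base_delay=0.0)
```

The design choice worth noting is that bad rows are set aside rather than fatal: the model keeps receiving clean data while someone investigates the quarantine.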

A production-grade AI system isn't just a model; it's an integrated machine where the data pipeline is just as critical as the algorithm. If the pipeline breaks, the model is flying blind.

Understanding how to maintain and improve your underlying data is paramount for any AI model operating at scale. You can explore practical strategies for improving data quality to support business growth.

Choosing the Right Infrastructure for the Job

Your infrastructure choice—cloud, on-premises, or a hybrid approach—carries massive implications for your costs, scalability, and maintenance headaches. There's no single right answer here. The best choice depends entirely on your specific needs for scaling AI from pilot to production.

Here's a quick rundown to help guide your thinking:

- Cloud (AWS, Azure, GCP): Offers incredible scalability and flexibility. It's perfect for workloads with unpredictable demand, since you only pay for what you use. The catch? Costs can spiral out of control if you're not watching them carefully.

- On-Premises: Gives you maximum control over data security and compliance, which is a must-have for highly regulated industries. The downside is the huge upfront investment and the ongoing burden of maintaining it all yourself.

- Hybrid: Blends both worlds. You can keep sensitive data safe on-prem while using the cloud's muscle for heavy computation and scaling. It offers great flexibility but definitely adds complexity to management and integration.

Let your decision be driven by real factors: security requirements, your team's existing technical skills, your budget, and how much you expect usage to fluctuate. Don't just follow a "cloud-first" mantra without a clear-eyed analysis of the trade-offs.

Implementing MLOps and Seamless System Integration

This is where the rubber meets the road—where your polished AI model has to survive in the chaotic, real-world environment of your business. It’s one thing to build a model that looks great in a controlled lab setting; it's a whole different ballgame to make it a living, breathing part of your day-to-day operations.

I see this all the time. Companies get stuck because they try to manage a production-scale model with the same manual, one-off approach they used for the pilot. That just doesn't work. To truly scale, you need a systematic, automated framework. That framework is MLOps (Machine Learning Operations).

For a business leader, MLOps isn't just technical jargon. Think of it as the assembly line for your AI. It automates deployment, keeps a constant eye on performance to catch any degradation, and automatically triggers retraining when the model starts to drift. It’s what makes scaling AI from pilot to production a reality, not just a goal.
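"Drift" can sound abstract, so here is one common way to measure it: the Population Stability Index (PSI), which compares how a score or feature is distributed in the training baseline versus recent production data. This sketch uses equal-width bins taken from the baseline range and treats a PSI above 0.2 as the retraining trigger. That threshold is a widely used rule of thumb, not a universal standard, and the sample scores are hypothetical; in practice an MLOps platform would run a check like this on a schedule.

```python
import math


def _bin_shares(values: list[float], lo: float, hi: float, bins: int) -> list[float]:
    """Share of values in each equal-width bin over [lo, hi]; out-of-range values go to the edge bins."""
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline
    counts = [0] * bins
    for v in values:
        idx = min(max(int((v - lo) / width), 0), bins - 1)
        counts[idx] += 1
    # A small floor avoids log(0) when a bin is empty.
    return [max(c / len(values), 1e-6) for c in counts]


def population_stability_index(baseline: list[float], current: list[float], bins: int = 10) -> float:
    """PSI = sum over bins of (actual share - expected share) * ln(actual / expected)."""
    lo, hi = min(baseline), max(baseline)
    expected = _bin_shares(baseline, lo, hi, bins)
    actual = _bin_shares(current, lo, hi, bins)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def needs_retraining(baseline: list[float], current: list[float], threshold: float = 0.2) -> bool:
    """Flag the model for retraining when the distribution has shifted past the threshold."""
    return population_stability_index(baseline, current) > threshold


# Hypothetical lead scores: the training-time distribution vs. two production samples.
training_scores = [i / 100 for i in range(100)]
stable_week = [i / 100 for i in range(100)]
drifted_week = [0.9 + i / 1000 for i in range(100)]  # scores piling up near the top
print(needs_retraining(training_scores, stable_week))
print(needs_retraining(training_scores, drifted_week))
```

When the flag trips, the automated part of MLOps kicks in: retrain on fresh data, validate, and redeploy through the same controlled rollout you used the first time.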

Choosing Your Deployment Strategy

Once you’ve adopted an MLOps mindset, the next big question is how to get the model out into the wild. You don’t just flip a switch and hope for the best. A gradual, strategic rollout is crucial to minimize risk and gather real-world performance data before you go all-in.

Your choice of deployment pattern really depends on your risk tolerance and the specific use case. A customer-facing recommendation engine might call for a cautious canary release, while an internal forecasting tool is a great candidate for a low-risk shadow deployment.

To help you decide, here's a quick breakdown of the most common approaches.

AI Deployment Patterns at a Glance

| Deployment Pattern | Best For | Key Consideration |
|---|---|---|
| Shadow Deployment | Low-risk environments where you want to compare the new model's performance against the existing system without impacting users. | Great for validation, but you won't get direct user feedback since the model's output isn't live. |
| Canary Release | Rolling out a new feature to a small group of users (e.g., 5% of your sales team) to gather feedback and monitor impact in a controlled setting. | Perfect for minimizing blast radius. Allows for a quick rollback if issues arise with the initial user group. |
| Blue-Green Deployment | Mission-critical applications where downtime is not an option. You can switch all traffic to the new version instantly and revert just as fast. | Requires double the infrastructure, which can be costly. But the near-zero downtime is a massive advantage. |
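To make the first two patterns concrete, here is a minimal sketch of how they can work at the routing layer. Canary assignment hashes each user ID into one of 100 stable buckets, so the same person always sees the same version; shadow mode calls both models but only ever returns the current model's answer, logging the candidate's output for offline comparison. The model callables and the 5% default are illustrative assumptions. Blue-green switching usually lives at the load-balancer or infrastructure level rather than in application code, so it isn't shown.

```python
import hashlib
from typing import Any, Callable

Model = Callable[[dict[str, Any]], Any]


def in_canary(user_id: str, percent: float) -> bool:
    """Deterministically place a user in the canary group (percent is 0-100)."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100  # stable bucket 0-99 for this user
    return bucket < percent


def canary_route(user_id: str, features: dict[str, Any], current: Model, candidate: Model,
                 percent: float = 5) -> Any:
    """Serve the candidate model to the canary slice and the current model to everyone else."""
    model = candidate if in_canary(user_id, percent) else current
    return model(features)


def shadow_predict(features: dict[str, Any], current: Model, candidate: Model,
                   log: list[dict[str, Any]]) -> Any:
    """Always return the current model's answer; record the candidate's output for comparison."""
    live = current(features)
    try:
        log.append({"features": features, "live": live, "shadow": candidate(features)})
    except Exception as exc:  # a failing candidate must never affect users
        log.append({"features": features, "live": live, "shadow_error": repr(exc)})
    return live
```

Rolling back a canary is then a one-number change: set the percentage to 0 and every user is back on the current model.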

Choosing the right pattern is a key part of de-risking your AI launch. To make sure your initiatives move forward without a hitch, it's also worth having your engineering leads review the top MLOps best practices.

Embedding AI Where Your Team Lives

Here’s a hard truth: the most brilliant model in the world is completely useless if it’s not woven into the tools your team uses every single day. Successful AI is embedded, not bolted on. That means pushing insights directly into your CRM, ERP, or whatever core platform your people live in.

The goal is to make the AI invisible. It shouldn't feel like another tool to log into; it should feel like the system just got smarter. This seamless integration is the true mark of a production-ready AI solution.

This is where you unlock the real value. For B2B growth leaders, especially in manufacturing and other middle-market companies, this demands a partner who understands deep system integration. This is our bread and butter, and it's how we’ve helped clients achieve an average 58% reduction in manual effort across hundreds of projects.

Practical Example: An In-CRM Lookup Tool

I remember working with a national pest-control brand whose sales team was getting absolutely bogged down. To quote a service, they had to jump out of their CRM, cross-reference multiple complex pricing sheets, and then manually calculate everything. It was a huge time sink.

We didn’t build them some fancy, standalone calculator. Instead, we built an AI-powered lookup tool right inside their CRM. Now, a salesperson can enter a customer's address and service needs, click a button, and get an accurate, compliant quote generated instantly within the customer's record.

This wasn't just a new feature; it was a complete workflow transformation.

- Before: A clunky, 15-minute process bouncing between systems and spreadsheets.

- After: A lightning-fast, 30-second process, all done without ever leaving the CRM.

The impact was immediate. They saw a 69% faster lead-to-appointment time. The project was a home run because we focused on integrating the AI at the precise point of need, making the sales team faster and far more effective. This is what a successful jump from pilot to production looks like—and getting this phase right is a cornerstone of our AI enablement services.

Driving Adoption Through People-First Change Management

You can build the most technically brilliant AI system in the world, but if your team doesn’t actually use it, you’ve just built a very expensive monument to wasted effort. It’s a pattern I run into constantly.

This final, critical phase of scaling AI from pilot to production has almost nothing to do with technology and everything to do with people. My playbook for this stage isn’t about fancy features; it’s about making your team’s jobs genuinely better. Without a thoughtful, deliberate change management plan that puts the user at the absolute center, even the most promising AI becomes shelfware.

Shifting from Mandate to Motivation

The biggest mistake leaders make is treating AI adoption as a top-down mandate. They roll out a new tool, send a memo, and just expect people to get on board. This approach is a fast-track to failure because it completely ignores the one question on every employee's mind: "What's in it for me?"

Real adoption isn't about forcing compliance. It's about creating genuine motivation. To get there, you have to stop talking about features and start talking about the tangible benefits that solve your team's daily headaches.

Practical Examples

- For your sales team: It’s not about "using the new AI lead scorer." It's about "spending less time chasing dead-end leads and more time talking to prospects who are actually ready to buy."

- For your operations team: It’s not about "adopting an automated forecasting tool." It's about "getting back the three hours you spend buried in spreadsheets every Friday afternoon."

When you frame the change in terms of problems solved and time saved, you create pull, not push. People don't adopt technology; they adopt better ways of working.

You cannot simply announce a new AI tool and expect people to line up. You have to sell the vision, not the software. The most successful rollouts feel less like a corporate decree and more like a shared mission to solve a painful, long-standing problem.

Empowering Managers as Your Frontline Champions

A central AI team or the IT department can't drive adoption alone. They’re too far removed from the day-to-day grind. The real champions of change are your line managers—the people who live in the workflows and have the trust of their teams.

I always coach leaders to empower these managers to lead the charge. They are the only ones who can translate the AI's high-level benefits into the practical, on-the-ground reality for their people. When a sales manager, not an AI specialist, explains how a new system will help their reps hit quota, the message lands with far more weight.

Equip them to be effective evangelists:

- Simple Talking Points: Give them clear, concise language to explain the "why" behind the change.

- Targeted Training: Help them lead hands-on sessions that are specific to their team's actual work.

- Feedback Channels: Make them the primary point of contact for gathering feedback and crushing roadblocks.

This distributed ownership model is light-years more effective than a top-down approach. When managers are invested, their teams follow. It's a crucial element for leaders growing differently with AI. For a deeper look, check out our guide on how AI-enabled leaders are taming their tech stack to drive real results.

Creating a Human-Centric Rollout Plan

A winning adoption strategy needs a structured, people-first game plan. It boils down to great communication, user-focused training, and solid feedback loops.

1. Communicate Early and Often

Start talking about the "why" long before the tool ever goes live. Build some excitement and tackle the fears head-on. Reassure everyone that the goal is to augment their skills, not to replace them.

2. Develop User-Centric Training

Forget generic, one-size-fits-all webinars. Design role-specific training that shows users exactly how the AI tool fits into their current workflow to make their specific tasks easier and faster.

3. Establish Clear Feedback Loops

Create simple, accessible channels for people to report bugs, ask questions, and suggest improvements. Acting on this feedback quickly is non-negotiable—it shows your team their input matters and that the system is actually evolving to meet their needs.

Impact Opportunity

The data backs this up. Partnerships with specialized AI vendors often see a 67% success rate, a huge jump from the 33% for riskier internal builds. Why? Because experienced partners know to focus on back-office wins that make employees’ lives easier, instead of chasing flashy marketing gimmicks with little operational ROI. By focusing on making people’s jobs better, you create a powerful engine for adoption that technology alone can never achieve.

Your Path From AI Potential to Business Performance

Scaling AI from a promising pilot to a full-blown production system is a journey. It’s a strategic blend of technology, process, and—most importantly—people. We've walked through the whole roadmap, from setting a rock-solid ROI foundation to driving adoption with smart change management.

But here’s the real takeaway: success isn't about having the fanciest model. It’s about having the most integrated and widely used system that solves an actual business problem.

The massive gap between the 5% of companies that succeed and the 95% that get stuck in pilot purgatory comes down to a relentless focus on business outcomes. Startups get this right. I’ve seen young teams rocket from zero to $20 million in revenue in a year by zeroing in on a single pain point and executing flawlessly. Enterprises, on the other hand, often scatter their resources across a dozen pilots, which just dilutes the impact. Fortune's analysis breaks this down pretty well.

Turning Adoption into Performance

The path from a technical rollout to a genuine business asset is all about the human element. This isn't just a flip-the-switch moment.

This flow shows that training, gathering feedback, and empowering your champions aren't one-off tasks. They form a continuous cycle that builds momentum and ensures the technology actually sticks.

As a leader, your job is to champion this vision, ask the tough questions, and build the organizational muscle needed to turn AI potential into real, lasting performance. Keep your eyes on the business impact, not just the tech itself.

Common Questions on Scaling AI

As I work with leaders on taking AI from a promising pilot to a core business driver, the same questions always surface. These aren't just technical queries; they're the big, strategic hurdles that determine whether an AI initiative generates real value or just fizzles out.

Here are my straight-up answers to the questions I hear most often.

What’s the Single Biggest Mistake When Scaling an AI Pilot?

The most common and costly mistake is getting the "last mile" wrong. It’s a classic trap: leaders pour 90% of their effort into perfecting the model and only 10% into how their people will actually use it.

This is all about the integration and change management needed to truly weave the AI into daily workflows. A technically brilliant model that’s clunky, hard to access, or disrupts how people work is doomed from the start. It’ll die a slow death from low adoption.

Success means treating user experience, training, and seamless system integration as day-one priorities, not afterthoughts.

How Do You Calculate ROI for an AI Project Before It’s Fully Scaled?

You have to bake ROI calculations into the pilot design phase. Forget broad goals like "improving efficiency" and get specific with KPIs your finance team can actually work with.

Practical Examples

- For a sales AI, this could be "increase in qualified leads per representative."

- For an operational AI, it might be "decrease in manual data entry hours."

Then, you model the financial impact by assigning a real dollar value to those metrics. This process turns a cool tech idea into a concrete business case, projecting ROI based on hitting specific pilot targets. It makes getting the green light for a full rollout a much easier conversation.
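Here is what "assigning a real dollar value" can look like in its simplest form. This sketch converts hours saved into an annual benefit, then computes first-year ROI and the payback period on the one-time build cost. Every input (hours saved, headcount, loaded hourly cost, 48 working weeks, build and run costs) is a hypothetical placeholder that your finance team would replace with its own numbers.

```python
from dataclasses import dataclass


@dataclass
class RoiInputs:
    hours_saved_per_user_per_week: float
    users: int
    loaded_hourly_cost: float          # salary plus benefits and overhead, per hour
    one_time_build_cost: float
    annual_run_cost: float             # hosting, licenses, monitoring, retraining
    working_weeks_per_year: int = 48   # assumption; adjust to your calendar


def annual_benefit(x: RoiInputs) -> float:
    """Dollar value of the hours saved over one year."""
    return x.hours_saved_per_user_per_week * x.users * x.loaded_hourly_cost * x.working_weeks_per_year


def first_year_roi(x: RoiInputs) -> float:
    """(Benefit - cost) / cost for year one, as a fraction (0.5 means 50%)."""
    total_cost = x.one_time_build_cost + x.annual_run_cost
    return (annual_benefit(x) - total_cost) / total_cost


def payback_months(x: RoiInputs) -> float:
    """Months of net benefit needed to recover the one-time build cost."""
    monthly_net = (annual_benefit(x) - x.annual_run_cost) / 12
    if monthly_net <= 0:
        return float("inf")  # the system never pays for itself
    return x.one_time_build_cost / monthly_net


# Hypothetical operations example: 20 analysts each win back 3 hours a week.
inputs = RoiInputs(hours_saved_per_user_per_week=3, users=20, loaded_hourly_cost=50.0,
                   one_time_build_cost=60_000, annual_run_cost=24_000)
print(f"Annual benefit: ${annual_benefit(inputs):,.0f}")
print(f"First-year ROI: {first_year_roi(inputs):.0%}")
print(f"Payback: {payback_months(inputs):.1f} months")
```

A simple model like this also makes the pilot's job explicit: if the pilot can't demonstrate the hours-saved figure, the business case for scaling doesn't hold.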

The most convincing business cases are never built on vague promises. They’re built on a clear, quantifiable line connecting the AI’s function directly to a P&L item.

Should We Build Our Own AI or Partner with a Vendor?

From what I’ve seen across the board, most middle-market companies find partnering with a specialized AI firm is the faster, more reliable path. Building a solution from scratch is a massive undertaking that demands incredibly rare and expensive talent.

The data backs this up—partnerships just tend to succeed more often than internal builds. A good partner brings more than just tech skills; they bring strategic experience from hundreds of other projects. They’ve already seen the common pitfalls and know how to avoid them.

This lets your team stay focused on running the business while using expert guidance to ensure the AI solution actually moves the needle.

Ready to turn your AI potential into measurable business performance? The team at Prometheus Agency specializes in guiding leaders through every stage of AI enablement, from ROI-driven pilots to full-scale production. Schedule your complimentary Growth Audit and AI strategy session to build a clear roadmap for success. Learn more at prometheusagency.co.