When people talk about AI ethics and compliance consulting, what they really mean is getting the expert guidance needed to build and run AI responsibly. It’s about turning abstract ideas like fairness and transparency into concrete actions, so you can innovate without stumbling into legal, financial, or brand-destroying disasters.

Key Takeaways

- Proactive Governance is a Must: AI ethics is no longer a theoretical debate; it's a practical requirement for sustainable growth, risk mitigation, and building customer trust.

- From Cost to Advantage: Viewing compliance as just an expense is a mistake. A strong ethical framework is a competitive differentiator that attracts enterprise clients and top talent.

- Action is Required: Building a responsible AI program involves tangible steps: creating a cross-functional team, inventorying all AI systems, defining success metrics, and engaging expert partners for a formal risk assessment.

- Partner Selection is Crucial: The right consulting partner focuses on business outcomes, has hands-on technical experience, and aims to make your team self-sufficient.

Moving Beyond AI Hype to Real-World Governance

For B2B leaders, "responsible AI" has officially left the chatroom and entered the boardroom. It's no longer a nice-to-have virtue signal; it's the bedrock of customer trust, scalability, and long-term market advantage. The real challenge is closing the gap between high-level ethical goals and the nitty-gritty of daily operations. That's where expert help becomes essential.

An AI ethics and compliance consultant helps you build the guardrails that keep your AI ambitions from flying off the rails. Proactive governance isn't about pumping the brakes on innovation—it's about making sure you can hit the accelerator safely.

Why Proactive Governance Matters

Think of a good governance framework as a decision-making engine. It gives you clear answers to tricky questions before they blow up into full-blown crises, allowing your organization to move faster and with more confidence.

- Trust Becomes Your Differentiator: In a crowded market, being able to prove your AI is ethical is a massive selling point that attracts savvy enterprise clients.

- You Sidestep Major Risks: It helps you avoid the catastrophic brand damage, regulatory fines, and legal battles that pop up when biased or non-compliant AI goes wrong.

- You Can Scale Sustainably: Building ethics into your AI from day one is worlds more efficient than trying to bolt on compliance after you’ve already scaled.

Key Takeaway: Getting AI ethics right isn’t just a compliance checkbox. It’s a core competency for any executive serious about using AI for sustainable growth, turning a potential liability into a strategic asset.

Practical Example: Biased Lead Scoring

Imagine a B2B SaaS company using an AI model to score inbound sales leads. Without a solid ethical framework, that model could easily learn biases from old data. It might start deprioritizing leads from smaller companies or certain industries, causing the business to unknowingly bleed valuable opportunities. By bringing in an AI ethics consultant, the company could implement a governance plan. They would audit the training data for bias and set up a "human-in-the-loop" review process for any leads that scored suspiciously low. This single move prevents a potential data crisis and keeps the sales pipeline fair and effective. A tool like the supportGPT AI application would absolutely require this kind of strong governance to function properly in the real world.
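To make the audit step concrete, here is a minimal Python sketch of how a team might compare qualification rates across lead segments. The lead records, the segment names, the 0.5 score threshold, and the 0.8 disparity cutoff are all illustrative assumptions, not a legal or industry standard for lead scoring.

```python
from collections import defaultdict

def qualification_rates(leads, threshold=0.5):
    """Share of leads per segment whose score meets the threshold."""
    totals = defaultdict(int)
    qualified = defaultdict(int)
    for lead in leads:
        totals[lead["segment"]] += 1
        if lead["score"] >= threshold:
            qualified[lead["segment"]] += 1
    return {seg: qualified[seg] / totals[seg] for seg in totals}

def disparity_ratio(rates):
    """Lowest segment rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0

# Hypothetical scored leads, for illustration only.
leads = [
    {"segment": "enterprise", "score": 0.9},
    {"segment": "enterprise", "score": 0.7},
    {"segment": "enterprise", "score": 0.6},
    {"segment": "enterprise", "score": 0.3},
    {"segment": "small_business", "score": 0.8},
    {"segment": "small_business", "score": 0.4},
    {"segment": "small_business", "score": 0.2},
    {"segment": "small_business", "score": 0.1},
]

rates = qualification_rates(leads)
ratio = disparity_ratio(rates)
# Assumed trigger: a ratio below 0.8 sends the model for a closer look.
needs_audit = ratio < 0.8
```

A low ratio does not prove the model is unfair, since segments can differ for legitimate reasons. It simply tells the governance team where to look first.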

Impact Opportunity

The bottom line is clear: leaders who get proactive about AI governance aren’t just protecting their companies from massive downside risks—they’re unlocking a huge amount of upside potential. This foresight builds a more resilient, trusted, and ultimately more profitable business that's ready for whatever technology throws at it next.

Why Ethical AI Is Now a Boardroom Imperative

The conversation around AI ethics isn't just for IT departments or university labs anymore. It has officially arrived in the boardroom, quickly becoming a core part of corporate strategy and risk management. For any leader today, ignoring AI ethics is like ignoring financial regulations after the 2008 crisis—a gamble you simply can’t afford to take.

This isn’t happening in a vacuum. The shift is being driven by real-world, high-stakes forces, starting with a tidal wave of global regulations. Laws like the EU AI Act are creating a new global standard, slapping strict rules on AI systems based on how much risk they pose to people.

And non-compliance isn't just a slap on the wrist. We're talking about massive financial penalties, with fines that can soar as high as €35 million or 7% of global revenue. Suddenly, ethical AI goes from a nice-to-have philosophical idea to a hard-and-fast financial reality.
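For a rough sense of scale, the two ceilings can be turned into a quick exposure estimate. This sketch assumes the larger of the two figures applies, which is how the top penalty tier is generally described for larger companies. The function name and the sample revenues are hypothetical, and none of this is legal advice.

```python
FIXED_CAP_EUR = 35_000_000   # fixed ceiling cited above
TURNOVER_SHARE_PERCENT = 7   # share of global annual revenue cited above

def max_fine_exposure_eur(global_revenue_eur: int) -> int:
    """Upper bound on the fine, assuming the larger ceiling applies."""
    revenue_based = global_revenue_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, revenue_based)

# Hypothetical companies with 200 million and 2 billion euros in revenue.
mid_market_exposure = max_fine_exposure_eur(200_000_000)
enterprise_exposure = max_fine_exposure_eur(2_000_000_000)
```

Under these assumptions, the fixed ceiling dominates for the smaller company and the revenue-based ceiling dominates for the larger one.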

The Rising Tide of Stakeholder Demands

It's not just the regulators, either. Customers and investors are getting louder, demanding more transparency and accountability from the companies they do business with. In the B2B world, showing a real commitment to responsible AI is becoming a powerful way to stand out. Your enterprise clients are now looking at their own supply chain risks, which means they're putting your company’s ethical posture under a microscope.

The business case is crystal clear: companies that can prove their AI is fair, transparent, and secure are going to win more deals. The ones who can't will struggle to build the trust needed for any real, long-term partnership. The market for responsible AI is exploding, signaling a massive change in business priorities. In fact, it's projected to grow from $910.4 million in 2024 to $47,159 million (about $47.2 billion) by 2034.
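If you want to sanity-check a projection like this, the implied annual growth rate is easy to back out. The sketch below uses only the two figures quoted above and assumes a ten-year horizon (2024 to 2034). The function name is illustrative.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start and end value."""
    return (end_value / start_value) ** (1 / years) - 1

# Figures in millions of dollars, taken from the projection above.
growth = implied_cagr(910.4, 47_159, years=10)
print(f"Implied annual growth: {growth:.1%}")
```

With these inputs the implied rate works out to roughly 48% per year, which gives you a single number to compare against other market forecasts.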

Practical Example: Brand Risk Scenario

Imagine a bank uses an AI algorithm to approve loans. If the data it was trained on is full of historical biases, the model could end up automatically rejecting qualified applicants from certain backgrounds. The fallout? Not only crippling regulatory fines but also the kind of brand damage and public distrust that could take a decade to fix. This is exactly why AI ethics and compliance consulting is so critical—it helps you uncover and neutralize these hidden risks before they blow up.

From Cost Center to Competitive Advantage

It’s a huge mistake to see AI governance as just another line item on the expense report. Think of it as an investment in your company’s resilience and brand value. A solid ethical framework does more than just keep you out of legal trouble; it builds a foundation of trust that actually helps you grow faster.

Key Takeaway: Viewing AI ethics as merely a compliance hurdle is shortsighted. Leaders who champion it as a core business principle build stronger, more trusted brands that attract top talent and loyal customers.

Impact Opportunity

Leaders who champion ethical AI aren't just managing risk—they're building stronger, more trusted, and more profitable companies. A strong ethical stance attracts the best talent, locks in loyal customers, and cements your reputation as a forward-thinking industry leader. As you build a smarter organization, it's crucial to see how AI-enabled leaders are growing differently by baking these principles right into their core strategy. Getting ahead of AI ethics today ensures your company isn't just compliant—it's competitive and ready for whatever comes next.

The Building Blocks of an AI Governance Framework

Moving from abstract principles to on-the-ground action means building a structured AI governance framework. Don't think of this as a restrictive rulebook. It's more like an architectural blueprint for responsible, high-performing AI systems. It’s what translates the "why" of ethical AI into the "what" and "how" of your daily operations, making sure your tech actually aligns with your business goals.

Without this structure, even the best-intentioned AI projects can wander into risky territory, creating vulnerabilities that were entirely avoidable. A solid framework stands on several key pillars, each one designed to manage a specific kind of risk and responsibility.

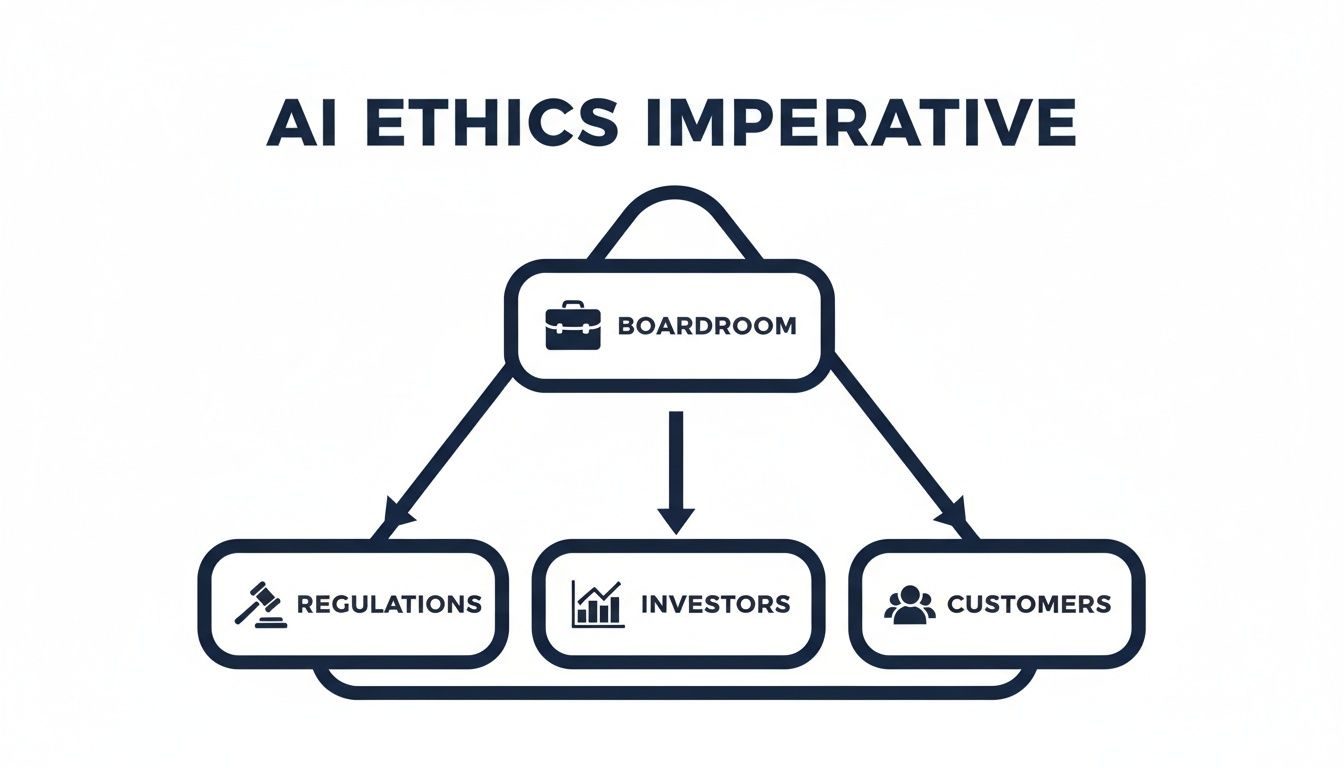

The push for this kind of structured governance is coming from the top. Boardroom decisions are now heavily influenced by a mix of regulatory pressure, investor expectations, and, of course, customer demands.

As this shows, accountability starts in the boardroom, which has to answer directly to regulators, investors, and the market itself.

Proactive Risk Assessment

The first pillar is all about proactive risk assessment. This isn't damage control; it's about spotting potential harm before it can ever happen. You have to dive deep into your AI models and the data they consume to sniff out hidden biases, privacy issues, and the potential for unfair outcomes. It’s about asking the tough "what if" questions to really stress-test your systems.

Practical Example: Algorithmic Bias in Logistics

Imagine a logistics company using an AI to map out delivery routes. A proactive assessment might find that the algorithm, trained on old data, is accidentally creating service gaps in lower-income areas simply because they had fewer deliveries in the past. Catching this early allows the company to retrain the model with better data, ensuring fair service and dodging a potential PR nightmare.

Key Takeaway: A proactive mindset shifts risk management from a reactive, crisis-response mode into a strategic, forward-looking process. It’s about protecting the business by finding and fixing flaws before they ever see the light of day.

Regulatory Mapping And Policy Development

Next up is regulatory mapping. The global AI legal scene is a messy patchwork of rules: think GDPR, CCPA, and the EU AI Act, whose obligations are phasing in over several years. This pillar is about mapping your AI work against these legal goalposts to make sure you’re compliant today and ready for what’s coming tomorrow. For many, this means getting serious about initiatives like AI Act readiness.

Once you know the external rules, you need to create your own internal ones. Actionable policy development means writing clear, no-nonsense guidelines for your teams on how to build, deploy, and manage AI. These aren't fluffy mission statements; they’re the practical rules of the road that guide everyday decisions.

To help clarify the external market, here's a quick look at some of the major regulations you'll encounter.

Mapping Major AI and Data Privacy Regulations

| Regulation | Geographic Scope | Primary Focus for AI Systems |
|---|---|---|
| EU AI Act | European Union | Classifies AI systems by risk (unacceptable, high, limited, minimal) and imposes strict requirements on high-risk applications, focusing on transparency, data quality, and human oversight. |
| GDPR | European Union | Governs all personal data processing. For AI, it impacts automated decision-making, requiring explainability and the right for individuals to contest algorithmic outcomes. |
| CCPA/CPRA | California, USA | Grants consumers rights over their personal data, including the right to know how it's used in automated decision-making and profiling. Requires clear disclosures. |
| PIPEDA | Canada | Governs the collection, use, and disclosure of personal information. Requires accountability for automated decision-making systems and clear explanations for their outcomes. |

This is just a snapshot, but it shows how different jurisdictions are tackling the same core issues: fairness, transparency, and accountability.

Continuous Auditing And Oversight

The final pillar is continuous auditing and oversight. An AI model isn't a "set it and forget it" asset; it learns and changes over time. This pillar is about setting up systems for ongoing monitoring to make sure models keep performing as they should and don't drift into bias or non-compliance.

This usually involves a cross-functional governance council and using tech for real-time monitoring. These constant checks are the only way to be sure your AI remains fair, compliant, and effective long after launch. It’s a non-negotiable part of any serious AI ethics and compliance consulting engagement.
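One way a monitoring job might check for drift is the population stability index (PSI), which compares how a model's scores are distributed today against a baseline. The sketch below is a minimal, assumption-heavy version: the score bands, the sample proportions, and the 0.2 alert level (a common rule of thumb, not a standard) are all illustrative.

```python
import math

def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two binned distributions given as lists of proportions."""
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # guard against log(0)
        psi += (a - e) * math.log(a / e)
    return psi

# Hypothetical share of scores falling into four score bands.
baseline = [0.25, 0.25, 0.25, 0.25]   # at launch
current = [0.10, 0.20, 0.30, 0.40]    # this month

psi = population_stability_index(baseline, current)
DRIFT_ALERT = 0.2  # assumed alert level; tune it to your own risk appetite
drift_detected = psi > DRIFT_ALERT
```

PSI only tells you that the score distribution has moved. Whether that movement reflects bias, a market change, or a data pipeline bug is a question for the governance council.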

The demand for this kind of specialized help is exploding. Recent data shows that demand for ethical AI and bias mitigation consulting services has shot up by 55%, a clear signal that the market is shifting toward transparency.

Impact Opportunity

These building blocks—risk assessment, regulatory mapping, clear policies, and continuous auditing—are the foundation of a resilient governance strategy. Getting them right is a crucial part of any successful AI enablement journey, ensuring your innovation is built on a foundation of trust and security.

What a Real AI Ethics Consulting Engagement Looks Like

Understanding the theory behind AI governance is one thing, but seeing it in action is something else entirely. So, what does an AI ethics and compliance consulting engagement actually look like on the ground?

It’s not about endless meetings or dusty theoretical reports. It’s a hands-on, structured partnership designed to build a real, durable system for responsible AI.

Practical Example: A B2B SaaS Company Journey

Imagine a mid-sized B2B SaaS company that knows it needs to get its AI governance in order to land bigger enterprise clients. They're just not sure where to start. This is the moment they bring in a specialized consulting partner, kicking off a multi-phase journey.

Phase 1: Discovery and Risk Audit

The engagement doesn't start with solutions—it starts with questions. Consultants begin by acting like detectives, mapping the company's entire AI ecosystem. This means identifying every single model in use or development, from the sales team’s lead-scoring algorithm to the customer support chatbot.

Once that inventory is complete, the risk audit begins. Each system is analyzed against established ethical benchmarks and fast-moving regulatory requirements.

- Key Question: Where are the hidden risks? Is the sales AI unintentionally biased against certain demographics? Does the chatbot store sensitive user data in a non-compliant way?

- Key Deliverable: The main output here is a risk heat map. This is a powerful visual tool that clearly identifies and prioritizes AI-related risks, showing leadership exactly which systems need immediate attention.

This initial audit provides the clarity needed to make every subsequent step count.
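Behind the visual, a risk heat map is usually just a scoring exercise. Here is a minimal sketch that assumes each system is rated from 1 to 5 on likelihood and impact. The band cutoffs, system names, and ratings are hypothetical and would be set during the audit itself.

```python
def risk_band(likelihood: int, impact: int) -> str:
    """Map 1-5 likelihood and impact ratings to a heat-map band."""
    score = likelihood * impact
    if score >= 15:
        return "high"
    if score >= 6:
        return "medium"
    return "low"

# Hypothetical inventory entries with audit ratings.
systems = [
    {"name": "support chatbot", "likelihood": 3, "impact": 3},
    {"name": "lead-scoring model", "likelihood": 4, "impact": 4},
    {"name": "internal search", "likelihood": 1, "impact": 2},
]

# Highest combined score first, so leadership sees priorities at the top.
ranked = sorted(systems, key=lambda s: s["likelihood"] * s["impact"], reverse=True)
heat_map = [(s["name"], risk_band(s["likelihood"], s["impact"])) for s in ranked]
```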

Phase 2: Framework Design and Policy Co-Creation

With a clear picture of the risks, it's time to build the solution. This is a deeply collaborative stage. Consultants work side-by-side with the company’s internal teams—legal, IT, product, and leadership—to co-create a tailored AI governance framework.

This isn't some generic, off-the-shelf template. It’s custom-built to fit the company's operational realities and strategic goals.

This phase is all about turning abstract principles into concrete rules. The team develops actionable internal policies for data handling, model transparency, and human oversight. A key activity here is creating model transparency cards, which are like nutrition labels for AI, detailing a model’s purpose, performance, and limitations for everyone to see.
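A transparency card does not need special tooling to get started. The sketch below shows one possible structure, assuming the card covers purpose, performance, and limitations as described above. The field names, the `owner` field, and the sample values are illustrative, not a formal model card standard.

```python
from dataclasses import dataclass, field, asdict

@dataclass
class ModelCard:
    name: str
    purpose: str
    owner: str
    performance: dict = field(default_factory=dict)
    limitations: list = field(default_factory=list)

    def missing_sections(self):
        """Names of sections that are still empty."""
        return [key for key, value in asdict(self).items() if not value]

# Hypothetical card for a lead-scoring model; the values are made up.
card = ModelCard(
    name="lead-scoring-v2",
    purpose="Rank inbound sales leads for follow-up",
    owner="revenue-operations",
    performance={"auc": 0.81},
)

gaps = card.missing_sections()
```

A check like `missing_sections` gives the governance team a simple gate: a model with gaps in its card does not ship until someone fills them in.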

Key Takeaway: An effective AI governance framework isn’t forced on a company from the outside. It’s co-created with your team to ensure it’s practical, widely adopted, and built to last—directly addressing the real risks found in the audit.

Phase 3: Technical Implementation and Workflow Integration

This is where the framework jumps off the page and into practice. Consultants work with the company’s tech teams to embed the new governance controls directly into existing workflows and technology. The goal is to make compliance the path of least resistance, not another box to check.

This could mean configuring their CRM to flag high-risk automated decisions for human review. Or it might involve deploying software that automatically monitors algorithms for performance drift and bias. The focus is on seamless integration, making sure the ethical guardrails are woven into the operational fabric of the business.
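The routing logic behind a human-review flag can be very small. This is a sketch under stated assumptions: the `risk_tier` and `confidence` fields, the 0.7 confidence floor, and the function name are hypothetical, and a real CRM integration would depend on that platform's own automation features.

```python
def route_decision(decision: dict, confidence_floor: float = 0.7) -> str:
    """Return 'human_review' or 'auto' for one automated decision."""
    if decision["risk_tier"] == "high":
        return "human_review"      # high-risk decisions always get a reviewer
    if decision["confidence"] < confidence_floor:
        return "human_review"      # the model is unsure, so a person decides
    return "auto"

# Hypothetical decisions coming out of a scoring model.
decisions = [
    {"id": "d1", "risk_tier": "high", "confidence": 0.95},
    {"id": "d2", "risk_tier": "low", "confidence": 0.55},
    {"id": "d3", "risk_tier": "low", "confidence": 0.90},
]

routes = {d["id"]: route_decision(d) for d in decisions}
```

Keeping the rule this explicit makes it easy to audit later, because anyone can read exactly why a decision was or was not sent to a person.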

Building your team's understanding of these systems is a huge part of this, which is why assessing your team's AI Quotient belongs in this phase.

Phase 4: Ongoing Monitoring and Optimization

AI governance isn't a one-and-done project. It’s an ongoing discipline. The final phase establishes the systems for continuous oversight and improvement.

Consultants help set up compliance dashboards that give leadership a real-time, at-a-glance view of the health and performance of their AI systems. These dashboards track key metrics for fairness, accuracy, and regulatory adherence, allowing the company to spot and fix issues before they become problems.

Over time, the consultant's role often shifts to periodic reviews and advisory, ensuring the company can manage its framework independently while staying ahead of new regulations and emerging tech.

The market for this hands-on guidance is exploding. The Business Research Company projects that the Responsible AI Governance Consulting market will jump from $0.29 billion in 2024 to $0.44 billion in 2025, which it reports as a 49.5% compound annual growth rate (CAGR).

Impact Opportunity

By following this phased approach, an abstract goal like "becoming ethically compliant" becomes a concrete, manageable project. This roadmap delivers a clear ROI, de-risking innovation and turning responsible AI from a potential liability into a powerful competitive advantage that builds deep trust with customers and stakeholders.

How to Choose the Right AI Ethics and Compliance Partner

Picking the right partner for AI ethics and compliance is one of the most important calls you’ll make. Get it wrong, and you’re stuck with a binder full of academic theory that has zero connection to your business reality. But get it right, and that partner becomes a strategic asset, helping you navigate tricky regulations while carving out a real competitive advantage.

This isn’t about falling for flashy presentations or buzzwords. You need a team that gets it: responsible AI isn't just a legal or technical headache—it's a business imperative. The best consultants move past the theoretical chatter and get down to delivering tangible results that protect your company and help it grow.

Look for a Focus on Business Outcomes

Here’s your first filter: does a potential partner talk about business results or do they sound like a college professor? While they need to know the theory, their main focus should be on turning good governance into measurable value. That means connecting an ethical framework directly to your commercial goals, whether that’s cutting customer churn, lowering financial risk, or grabbing more market share.

A partner who is truly obsessed with outcomes asks entirely different questions. They won’t just ask, "Is your algorithm biased?" Instead, they’ll ask, "How could this model's bias torpedo our Q3 sales pipeline, and what’s the dollar-figure risk?" It’s a subtle shift, but it’s everything. It’s what separates the theorists from the strategists who can actually deliver an ROI.

Key Takeaway: The best AI ethics and compliance consulting firms aren't academics in disguise. They are strategists who anchor every recommendation in your business objectives, making sure every policy and control strengthens your position in the market.

Prioritize Practical Tech Stack Experience

An ethics framework is completely useless if you can’t actually build it into your tech. A top-tier partner needs to have proven, hands-on experience weaving ethical guardrails and compliance checks directly into commercial tech stacks—think CRMs, marketing automation platforms, and your own proprietary software.

You have to push for specifics here. Ask them directly:

- How did you build human-in-the-loop oversight into a sales model running on Salesforce?

- Can you show us a compliance dashboard you built for a system on AWS?

- Walk me through a time you operationalized a fairness audit inside an existing product workflow.

How they answer tells you everything. If they give you concrete details, they can execute. If they waffle, they can only write reports. Without that practical, in-the-weeds experience, you’ll end up with a brilliant strategy that your team has no clue how to implement.

Evaluate Their Methodology and Partnership Model

Finally, you need to dig into their process and what they consider a successful engagement. A transparent, battle-tested methodology isn't a nice-to-have; it's essential. They should be able to clearly walk you through their entire process—from discovery and risk assessment to implementation and ongoing monitoring—with firm deliverables and timelines for each stage.

Practical Example: A Strong Partnership in FinTech

Think about a B2B FinTech company building a new AI-powered sales forecasting tool. They didn't just hire a consultant who handed them a report on bias. Instead, they found a partner who co-created a "Compliance by Design" workflow right alongside their developers. Every new feature was stress-tested for fairness and regulatory alignment before a single line of code was finalized. The outcome? A fully compliant sales AI that not only sailed through regulatory checks but also delivered 15% more accurate forecasts, giving a direct boost to revenue.

Ultimately, the real goal of an AI ethics and compliance consulting engagement is to make your own team self-sufficient. Look for a partner whose mission is to equip your people with the knowledge, tools, and processes to own AI governance long after the project wraps. That focus on enablement is what turns a short-term project into a true long-term investment.

Impact Opportunity

Equipping executives to ask these tough, practical questions is the key to confidently selecting a partner. By moving beyond surface-level criteria, you can find a firm that will deliver not just compliance, but a durable competitive edge built on trust and responsibility.

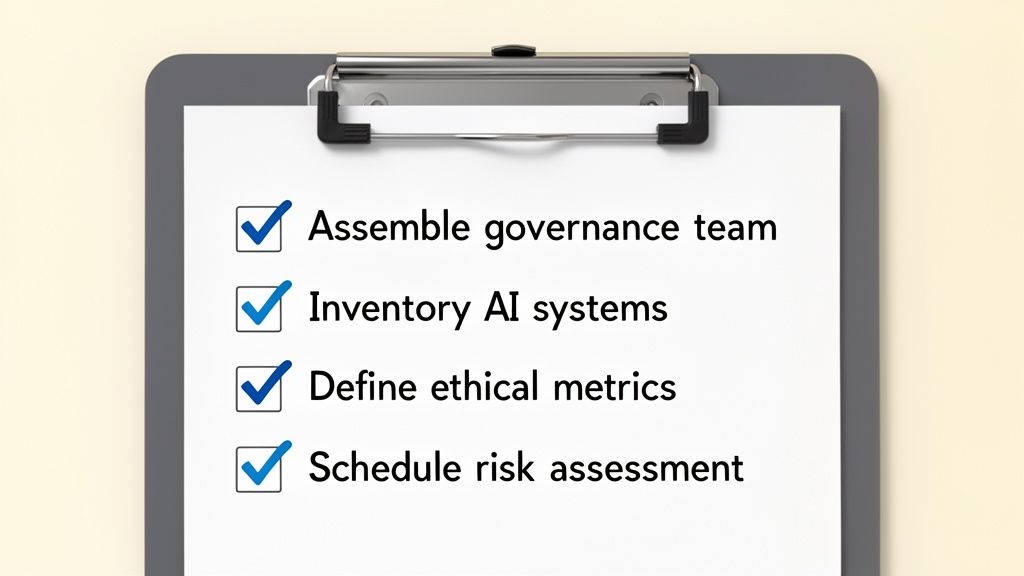

Your Executive Checklist for Implementing Responsible AI

It’s one thing to understand the principles of AI governance, but actually putting them into practice is another challenge entirely. For busy executives, the big question is often, "Where do I even start?" This checklist is designed to cut through the noise and give you an immediate, actionable game plan.

These aren't just theoretical exercises. They are concrete steps you can delegate, track, and build upon. The goal here is to create a durable foundation for AI ethics and compliance in your organization, turning abstract knowledge into real operational controls.

Assemble Your Governance Team

First things first: you need a cross-functional AI governance team. This is not a job for a single department. To get this right, you have to bring together people from legal, IT, product development, and senior leadership. This ensures you’re looking at risks and opportunities from every possible angle.

This group will be the champion for the entire initiative. They’ll write the policies, oversee the rollout, and make sure decisions aren't being made in a silo. Their combined expertise is what allows you to balance technical possibilities with legal guardrails and core business goals.

Key Takeaway: A dedicated, multi-disciplinary governance team is the engine of any successful responsible AI program. It establishes clear ownership and accountability from day one.

Conduct a Comprehensive AI Inventory

Let's be blunt: you can't govern what you don't know you have. Your next move is to map out every single AI and machine learning system being used or developed across the company. This means everything—from the third-party marketing tools your team loves to the proprietary algorithms your data scientists are building.

This inventory acts as your baseline, giving you a clear picture of your company's AI footprint. The objective is to document each system’s purpose, the data it uses, and the real-world impact of its decisions.

Practical Example: Uncovering "Shadow AI"

A B2B software company might run an inventory and discover its sales team is using an unsanctioned AI tool to generate outreach emails. This process brings that "shadow AI" into the light, letting the governance team assess its compliance and security risks before it creates a headache.
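An inventory can start life as a simple structured list. The sketch below assumes each entry records the system's purpose, the data it uses, and whether it has been approved. The class name, fields, and sample entries are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AISystem:
    name: str
    owner: str
    purpose: str
    data_used: tuple
    approved: bool  # has the governance team signed off on this tool?

# Hypothetical inventory entries, for illustration only.
inventory = [
    AISystem("lead-scoring model", "sales-ops", "Rank inbound leads",
             ("CRM records",), True),
    AISystem("email outreach generator", "sales", "Draft outreach emails",
             ("prospect contact data",), False),
]

# Anything in use without sign-off is treated as shadow AI.
shadow_ai = [system.name for system in inventory if not system.approved]
```

Even a list this basic gives the governance team a queue to work through, starting with the tools nobody approved.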

Define Your Success Metrics

What does a "win" look like for ethical AI in your business? Vague goals will get you nowhere. Your team needs to lock in three to five key performance indicators (KPIs) to measure how effective your governance framework truly is.

These metrics have to be specific and measurable. They’re how you turn abstract principles like "fairness" into concrete targets you can track month after month.

Key metrics could include:

- Bias Detection Rate: The percentage of models audited quarterly that show a reduction in demographic bias.

- Transparency Score: A score based on the percentage of high-risk models with completed and published model cards.

- Human Oversight Triggers: The number of automated decisions flagged for mandatory human review per week.
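To show how metrics like these might be calculated, here is a minimal sketch. The record layouts, field names, and sample numbers are assumptions made for illustration, and your own definitions may reasonably differ.

```python
def bias_reduction_rate(audits):
    """Percent of audited models whose bias metric fell since the last audit."""
    if not audits:
        return 0.0
    improved = sum(1 for before, after in audits if after < before)
    return 100.0 * improved / len(audits)

def transparency_score(models):
    """Percent of high-risk models with a published model card."""
    high_risk = [m for m in models if m["risk"] == "high"]
    if not high_risk:
        return 100.0
    published = sum(1 for m in high_risk if m["card_published"])
    return 100.0 * published / len(high_risk)

def oversight_triggers(decisions):
    """Number of automated decisions flagged for human review."""
    return sum(1 for d in decisions if d["flagged"])

# Hypothetical quarterly data: (bias metric before, bias metric after).
audits = [(0.30, 0.20), (0.10, 0.12), (0.25, 0.15), (0.40, 0.40)]
models = [
    {"risk": "high", "card_published": True},
    {"risk": "high", "card_published": True},
    {"risk": "high", "card_published": True},
    {"risk": "high", "card_published": False},
    {"risk": "low", "card_published": False},
]
decisions = [{"flagged": True}, {"flagged": False}, {"flagged": True}]

kpis = {
    "bias_reduction_rate": bias_reduction_rate(audits),
    "transparency_score": transparency_score(models),
    "oversight_triggers": oversight_triggers(decisions),
}
```

Whatever definitions you settle on, write them down as precisely as this so the numbers mean the same thing every month.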

Schedule an Initial Risk Assessment

Okay, you've got your team, your inventory, and your metrics. The single most impactful next step you can take is to schedule an initial risk assessment with a strategic partner. An expert in AI ethics and compliance consulting can hit the accelerator on your progress, bringing a battle-tested methodology to pinpoint your biggest vulnerabilities.

This kind of engagement gives you an objective, third-party perspective that validates your internal work and, just as importantly, uncovers blind spots. The result is a clear, prioritized roadmap that lets you focus your time and money on the risks that actually matter to your business.

Impact Opportunity

This checklist provides a clear, immediate path forward. By taking these four steps, you move beyond discussion and into decisive action. You enable your organization to build a responsible AI framework that not only ensures compliance but also builds trust, strengthens your brand, and unlocks sustainable growth with confidence and clarity.

Frequently Asked Questions About AI Ethics Consulting

As leaders start to dig into AI governance, a lot of the same questions come up. Here are some straight answers to the most common ones we hear about AI ethics and compliance consulting, designed to cut through the noise and give you a clear path forward.

How Does AI Ethics Consulting Directly Impact My Company's ROI?

Let's be clear: AI ethics consulting isn't a cost center. It’s an investment in sustainable growth that drives ROI by heading off catastrophic risks, building unshakable brand trust, and making your operations sharper.

Practical Example: Avoiding a Hiring Lawsuit

What happens if you catch and remove a subtle bias in your automated hiring tool? You’ve just avoided a multi-million dollar discrimination lawsuit. More than that, you've protected your company from the kind of reputational hit that makes top talent run the other way for years.

Key Takeaway: When you build systems that are fair and transparent, you earn customer loyalty that lasts. A well-governed AI is simply more reliable, which means it integrates better and delivers stronger results in the parts of your business that actually make money.

Is AI Ethics Only a Concern for Large Enterprises?

Not at all. This is a critical issue for companies of any size. For a mid-market or growth-stage business, a single ethical blind spot or compliance failure can be a complete game-over. One data breach or a biased algorithm going public can stop your momentum cold and shatter the trust you’ve worked so hard to build.

Impact Opportunity

Smaller companies have a huge opportunity here. A rock-solid ethical framework can become a massive competitive advantage, helping you land enterprise clients who have sky-high standards for their partners. It's also a heck of a lot cheaper to build responsible AI from the ground up than to tear apart a scaled system to fix fundamental flaws later on.

What Is the First Practical Step to Address AI Ethics?

The single most effective first move you can make is a full AI system inventory combined with a high-level risk assessment. You can't govern what you don't know you have.

This means finding every single AI tool and model being used or developed across your entire organization—from the platform your marketing team uses to the dashboards in operations. Once you have that map, you can quickly evaluate each one for potential red flags like bias, privacy issues, or a total lack of transparency. This process gives you an immediate, real-world baseline of your exposure and points you directly to your most urgent priorities.

Ready to turn AI risks into a competitive advantage? The team at Prometheus Agency specializes in building practical, outcome-focused AI governance frameworks that protect your business and accelerate growth. Start with a complimentary Growth Audit and AI strategy session to map your path forward. Learn more about our AI enablement services at prometheusagency.co.